Inside Claude Code With Its Creator Boris Cherny

YouTube · PQU9o_5rHC4

Takeaways

- Anthropic's core philosophy is to build for the LLM capabilities expected six months in the future, not for current limitations.

- Claude Code began accidentally, as a simple terminal chat app for testing the API.

- Boris's 'AGI moment' was seeing the model independently use a bash tool to script his Mac.

- Product development for Claude Code is driven entirely by 'latent demand' observed in user behavior.

- The `claude.md` file should be kept short and focused, and is often deleted and rebuilt with each new model.

- Per-engineer productivity at Anthropic has increased 150% since Claude Code's release, and some engineers now code 100% through Claude.

- The 'software engineer' title is expected to disappear as coding becomes generally solved by AI.

- Claude Code's plugins feature was built entirely by a swarm of agents over a weekend, with minimal human intervention.

Insights

1. Anticipating Future LLM Capabilities Is Paramount

Anthropic's development strategy for Claude Code is to build for the model expected six months in the future, not the current one. This means identifying frontiers where the current model is weak, knowing it will improve, and avoiding over-engineering scaffolding that will soon be rendered obsolete by model advancements.

We don't build for the model of today. We build for the model six months from now... Try to think about what is that frontier where the model is not very good at today cuz it's going to get good at it.

2. Claude Code's Accidental Genesis and the 'AGI Moment'

Claude Code started as a simple terminal chat app built by Boris Cherny to understand Anthropic's API. The pivotal 'AGI moment' occurred when he gave the model a bash tool, and it independently wrote AppleScript to identify music playing on his Mac, demonstrating an unexpected desire and capability for tool use.

It was so accidental that it just kind of evolved into this... I just built, like, a little terminal app to use the API... I asked it, 'What music am I listening to?' It wrote some, like, AppleScript to script my Mac... And this was Sonnet 3.5... That was my first, I think ever, feel-the-AGI moment where I was just like, 'Oh my god, the model, it just wants to use tools. That's all it wants.'

3. Product Development Driven by Latent Demand

Every feature in Claude Code, including 'Plan Mode' and `claude.md`, emerged from observing how users were already trying to interact with the model. Instead of inventing new workflows, the team identified existing user behaviors and built tools to make those easier.

Probably the single for me biggest principle in product is latent demand... every bit of this product is built through latent demand... Plan mode was: we saw users that were like, 'Hey Claude, come up with an idea, plan this out, but don't write any code yet.'

4. The Ephemeral Nature of LLM-Driven Codebases

Claude Code's codebase is in constant flux, with the entire product being rewritten and tools un-shipped or added every few weeks. There is no part of Claude Code that existed six months prior, reflecting the rapid evolution of underlying models and the need to shed obsolete scaffolding.

All of Claude Code has just been written and rewritten and rewritten and rewritten over and over and over. There is no part of Claude Code that was around 6 months ago... We unship tools every couple weeks. We add new tools every couple weeks.

5. Dramatic Productivity Gains with AI Agents

Anthropic engineers have seen a 150% increase in productivity per engineer since Claude Code's release, measured by pull requests and commits. Boris Cherny personally codes 100% with Claude Code, landing 20 PRs daily without touching an IDE. This contrasts sharply with traditional 2% annual productivity gains at large tech companies.

Productivity per engineer at Anthropic has grown 150%... For me personally it's been 100% for, like, since Opus 4.5. I just, I uninstalled my IDE. I don't edit a single line of code by hand. It's just 100% Claude Code and Opus. I land, you know, like 20 PRs a day, every day.

6. Agent Topologies for Complex Tasks

Claude Code uses 'sub-agents' (recursive Claude Code instances) to tackle complex problems such as debugging or building new features. A 'mama Claude' agent can spawn multiple sub-agents to research in parallel, coordinate tasks through tools like Asana, and even build entire features, such as the plugins system, with minimal human oversight.

The majority of agents are actually prompted by Claude today in the form of sub-agents... An engineer on the team just gave Claude a spec and told Claude to use an Asana board, and then Claude just put up a bunch of tickets on Asana and then spawned a bunch of agents, and the agents started picking up tasks.
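The coordinator/sub-agent pattern described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how Claude Code is implemented: `SubTask`, `run_subagent`, and `coordinate` are hypothetical names, and `run_subagent` is a stub that does not call Claude Code or any Anthropic API. It shows only the shape of the topology: the coordinator hands each sub-agent nothing but its own ticket (a stand-in for a fresh context window), runs the sub-agents concurrently under a cap, and collects results in ticket order.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class SubTask:
    """A hypothetical ticket, e.g. one card on a task board."""
    ticket_id: str
    description: str


async def run_subagent(task: SubTask) -> str:
    """Hypothetical sub-agent stub; a real system would start a new model session here.

    It receives only its own task, modelling the "fresh context" idea:
    no history from the coordinator or sibling agents is passed in.
    """
    await asyncio.sleep(0)  # placeholder for real work
    return f"{task.ticket_id}: done ({task.description})"


async def coordinate(tasks: list[SubTask], max_parallel: int) -> list[str]:
    """Hypothetical coordinator: fan tasks out, cap concurrency, gather results."""
    semaphore = asyncio.Semaphore(max_parallel)

    async def worker(task: SubTask) -> str:
        async with semaphore:  # at most max_parallel sub-agents run at once
            return await run_subagent(task)

    # gather returns results in the same order as the input tasks
    return await asyncio.gather(*(worker(t) for t in tasks))


if __name__ == "__main__":
    board = [
        SubTask("T-1", "research existing plugin loaders"),
        SubTask("T-2", "draft manifest format"),
        SubTask("T-3", "write integration tests"),
    ]
    for line in asyncio.run(coordinate(board, max_parallel=2)):
        print(line)
```

The `max_parallel` cap is the natural place to calibrate how many agents run at once; a harder research or debugging task might justify a higher value, at the cost of more concurrent model calls.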

Bottom Line

The host suggests that Claude Code transcripts could be used to evaluate engineering candidates, revealing their thinking process, debugging skills, use of plan mode, and understanding of systems, potentially creating a 'spiderweb graph' of their AI coding skill level.

This could revolutionize technical hiring, shifting focus from traditional coding challenges to how effectively candidates collaborate with AI.

Develop tools or platforms for analyzing and scoring AI-assisted coding transcripts for recruitment.

Claude agents at Anthropic already communicate with each other, talk to internal users on Slack, and even tweet (though Boris deletes the tweets due to tone). They can proactively message engineers with clarifying questions based on git history.

This indicates a future where AI agents are not just tools but active participants in communication and workflow, blurring the lines between human and AI interaction.

Build AI-driven communication and collaboration platforms that integrate deeply with development and operational workflows, enabling autonomous information gathering and dissemination.

Despite initial expectations that Claude Code would quickly move beyond the terminal, its simple, elegant form factor has proven surprisingly effective and delightful for developers, demonstrating that traditional interfaces can be powerfully reimagined with AI.

This challenges assumptions about necessary UI complexity for advanced AI tools, suggesting that minimalist, text-based interfaces can still offer high utility and user satisfaction.

Explore AI-enhanced command-line interfaces or text-based tools for complex domains, focusing on delight and efficiency within constrained environments.

Opportunities

AI-Driven Developer Productivity Platform

A platform that integrates LLMs deeply into the development workflow, offering features like automated git operations, unit test generation, bug fixing, and production debugging, all accessible via a unified interface (e.g., terminal or desktop app). The platform would prioritize rapid iteration and adaptability to new model capabilities.

AI Agent Orchestration and Collaboration Platform

A service that allows users to define complex tasks, specify agent topologies, and deploy swarms of AI agents to collaborate on projects, managing their context windows, communication, and task assignment (e.g., via Asana boards). This platform would enable 'mama Claude'-like capabilities, where a primary agent can spawn and manage sub-agents, each with a fresh context, to tackle different parts of a larger problem, facilitating parallel research and development.

AI-Enhanced Hiring & Skill Assessment Tools

A tool that analyzes transcripts of candidates interacting with AI coding agents (e.g., Claude Code, Codex) to assess their problem-solving approach, debugging skills, use of plan mode, and overall 'AI coding skill level' through a structured evaluation framework. This would provide objective, behavioral data on how engineers leverage AI, moving beyond traditional coding interviews to evaluate collaboration with intelligent tools.

Key Concepts

Build for the Model Six Months From Now

Instead of optimizing for current LLM limitations, anticipate future capabilities and design products that will be relevant when models improve. This avoids wasted effort on scaffolding that becomes obsolete with the next model release.

Latent Demand

Product development should focus on making it easier for users to do what they are already trying to do, rather than trying to get them to do new things. Observe user behavior closely to identify these unarticulated needs.

The Bitter Lesson (Never Bet Against the Model)

A principle from Rich Sutton's essay "The Bitter Lesson": general methods that scale with computation ultimately outperform approaches built on hand-engineered, domain-specific knowledge. For LLM products, this means assuming the model will eventually solve problems that currently require complex scaffolding, and preferring to wait for model improvements over extensive custom engineering.

Lessons

- When building on LLMs, design for the capabilities of models expected six months in the future, not current limitations, to avoid building obsolete scaffolding.

- Prioritize identifying and addressing 'latent demand' by observing how users are already trying to use AI, rather than trying to force new workflows.

- Embrace a scientific, first-principles mindset and be willing to discard strong opinions, as LLM capabilities rapidly change what is relevant or effective in engineering.

- Keep AI agent instructions (like `claude.md`) minimal; delete and rebuild them with new model releases, adding instructions only when the model deviates.

- Leverage agent topologies and sub-agents for complex tasks, calibrating the number of parallel agents based on task difficulty to enhance research and debugging.

Rapid LLM Product Development Cycle

**Anticipate Future Model**: Identify areas where current LLMs are weak but are likely to improve significantly in 3-6 months. Build your product to leverage these anticipated future capabilities.

**Observe Latent Demand**: Continuously watch how users (or internal teams) are trying to use the model, even if it's cumbersome. Identify repeated patterns or workarounds.

**Prototype Rapidly**: Build minimal prototypes (e.g., in a terminal) to test new ideas quickly. Aim for 20 prototypes in a few hours, not 3 in two weeks.

**Iterate and Ship Based on Feedback**: Give prototypes to users immediately, gather feedback (GitHub issues, Slack, direct observation), and iterate rapidly. Ship small, frequent updates.

**Prune Scaffolding**: Regularly review and delete custom code or 'scaffolding' that the latest model can now handle natively, trusting the model's increasing generality.

**Rewrite Frequently**: Be prepared to rewrite significant portions, or even the entire codebase, every few months as models evolve and new capabilities emerge.
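The 'Observe Latent Demand' step can be made concrete with a small scan over prompt logs. The sketch below is hypothetical: the pattern names, regular expressions, and `min_count` threshold are illustrative assumptions, not Anthropic's tooling. The `plan_before_code` pattern echoes the "plan this out but don't write any code yet" requests that preceded Plan Mode, and `persistent_instructions` stands in for users restating the same standing instructions, the kind of need `claude.md` addresses.

```python
import re
from collections import Counter

# Hypothetical workaround patterns; names and regexes are illustrative assumptions.
WORKAROUND_PATTERNS = {
    # Users asking for a plan while explicitly forbidding code (cf. Plan Mode).
    "plan_before_code": re.compile(
        r"\b(plan|outline)\b.*\bdon'?t write (any )?code\b", re.IGNORECASE
    ),
    # Users restating standing instructions (cf. claude.md).
    "persistent_instructions": re.compile(
        r"\b(remember|always)\b.*\b(in this repo|from now on)\b", re.IGNORECASE
    ),
}


def find_latent_demand(prompts: list[str], min_count: int) -> dict[str, int]:
    """Count prompts matching each pattern; keep patterns seen at least min_count times."""
    counts = Counter()
    for prompt in prompts:
        for name, pattern in WORKAROUND_PATTERNS.items():
            if pattern.search(prompt):
                counts[name] += 1  # each prompt counts at most once per pattern
    return {name: n for name, n in counts.items() if n >= min_count}


if __name__ == "__main__":
    log = [
        "Hey Claude, plan this out but don't write any code yet",
        "Outline the migration first, dont write code",
        "Fix the failing test in utils.py",
        "From now on, always run the linter in this repo",
    ]
    print(find_latent_demand(log, min_count=2))  # {'plan_before_code': 2}
```

Regular expressions are a crude proxy; reading transcripts directly or clustering prompts would catch more, but the principle is the same: count repeated workarounds before building a feature for them.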

Notable Moments

Boris describes his first 'AGI moment' when Claude 3.5 Sonnet, given a bash tool, wrote AppleScript to identify music playing on his Mac, an unexpected and powerful demonstration of tool use.

This highlights the emergent and often surprising capabilities of LLMs, particularly their desire to use tools, which was a core insight for Claude Code's development.

An engineer on Boris's team enabled Claude Code to write its own tools and implement new features, demonstrating a meta-level of AI automation.

This illustrates the potential for AI to accelerate its own development and feature creation, moving beyond simply assisting humans to autonomously building components.

Claude Code was used by NASA to plot the course for the Perseverance Mars rover.

This provides a concrete, high-stakes example of Claude Code's real-world utility and reliability, underscoring its impact beyond typical software development.

Quotes

"We don't build for the model of today. We build for the model six months from now."

"Oh my god, the model it just wants to use tools. That's all it wants."

"Probably the single for me biggest principle in product is latent demand."

"There is no part of Claude Code that was around 6 months ago. It's just constantly rewritten."

"I just, I uninstalled my IDE. I don't edit a single line of code by hand. It's just 100% Claude Code and Opus."

"I think what will happen is coding will be generally solved for everyone... I think we're going to start to see the title software engineer go away."

Related Episodes

Code Health Guardian

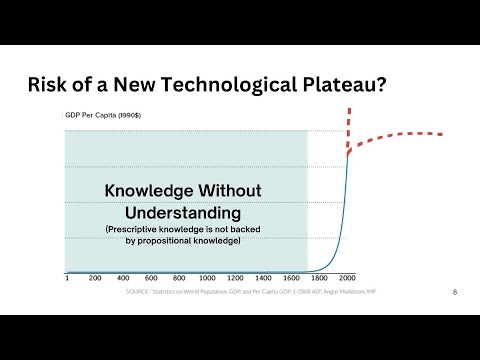

"This talk introduces a comprehensive model for understanding and managing code complexity, arguing for its objective nature and the critical role of human understanding in the AI era to maintain software health."

Joe Rogan Experience #2501 - Marc Andreessen

"Marc Andreessen details how AI is rapidly transforming society, offering 'universal basic superpowers' while highlighting critical political and economic policies that threaten American innovation and safety."

The productivity advice that will actually improve your life | Chris Bailey: Full Interview

"Chris Bailey, author of 'Intentional' and 'Hyperfocus,' reveals how intentionality, aligning goals with core values, and mastering focus are the true drivers of productivity, not generic advice or willpower."

Thin Harness, Fat Skills: The New Way To Build Software

"Gary Tan, YC CEO, details how he shipped hundreds of thousands of lines of code in months after a 13-year hiatus, leveraging AI agents and a "thin harness, fat skills" approach to achieve 400x productivity."