Privacy Ripple Effects from Adding or Removing Personal Information in Language Model Training

YouTube · IzIsHFCqXGo

Quick Read

Takeaways

- LLMs are increasingly trained on sensitive data (e.g., electronic health records, private emails) and are prone to memorizing and regurgitating it.

- The research introduces 'assisted memorization,' where PII that is not extractable at an early checkpoint becomes extractable at a later one.

- Assisted memorization is not merely delayed memorization; it is triggered by later training on data that contains n-grams overlapping with the PII (partial duplicates).

- Removing overlapping n-grams significantly reduces assisted memorization, indicating their causal role.

- A logistic regression model predicts assisted memorization from n-gram statistics, last-name counts, and domain counts.

- Adding more PII to training data (PII opt-ins) leads to a substantial increase in overall PII extraction, particularly with top-k sampling.

- The inclusion of new PII also increases the risk of extraction for *existing* PII that was already in the training set.

- Removing memorized PII (PII opt-outs) can inadvertently trigger the extraction of a new 'layer' of previously unextracted PII, akin to a 'privacy onion' effect.

- The choice of decoding method significantly impacts PII extraction; top-k sampling extracts many more emails than greedy decoding.

- Memorization audits should also evaluate examples that are not currently extracted, as they may become vulnerable later due to assisted memorization.

Insights

1. Assisted Memorization: PII Can Become Extractable Later in Training

The research identifies 'assisted memorization,' where PII seen early in training is not immediately memorized or extractable, but becomes so at a later checkpoint as training progresses. This challenges the assumption that older data is less vulnerable to extraction.

Examples seen early in training can remain vulnerable to extraction at later stages. On average, models show at least as much assisted memorization as immediate memorization, and the effect grows with model scale (e.g., GPT-2 large vs. small).
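The distinction between immediate and assisted memorization can be sketched as a simple labeling rule over checkpoints. This is a minimal illustration, not the study's evaluation code: the `first_seen` and `extracted_at` structures and the function name are hypothetical, and a real audit would populate them from training logs and extraction runs.

```python
from typing import Dict, List, Set


def classify_memorization(
    first_seen: Dict[str, int],
    extracted_at: List[Set[str]],
) -> Dict[str, str]:
    """Label each PII string as 'immediate', 'assisted', or 'not extracted'.

    first_seen[pii] is the index of the checkpoint whose training data first
    contained the PII (hypothetical bookkeeping). extracted_at[i] is the set
    of PII strings extracted from checkpoint i.
    """
    labels: Dict[str, str] = {}
    for pii, step in first_seen.items():
        if pii in extracted_at[step]:
            # Extractable at the checkpoint where it was first trained on.
            labels[pii] = "immediate"
        elif any(pii in later for later in extracted_at[step + 1:]):
            # Not extractable at first, but extractable at a later checkpoint.
            labels[pii] = "assisted"
        else:
            labels[pii] = "not extracted"
    return labels
```

Under this rule, a string labeled "not extracted" at the latest checkpoint is not thereby safe; it may move to "assisted" once further checkpoints are added.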

2. Overlapping N-grams Drive Assisted Memorization

Assisted memorization is primarily triggered by training on new data that contains n-grams (sequences of tokens) overlapping with the previously unmemorized PII. These partial duplicates or related fragments 'assist' the model in later recalling the full PII.

When a model is trained on data with overlapping n-grams (e.g., 'John McCarthy' when 'Elisabeth.McCarthy' was previously unmemorized), the original PII becomes extractable. Removing these overlapping n-grams from training batches reduced assisted memorization from 177 emails to only 10.
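One way to picture the overlap check is a set intersection over token n-grams. The sketch below is an assumption-laden illustration: the regex tokenizer, the function names, and the whole-document filtering strategy are hypothetical stand-ins, and a real pipeline would use the model's own tokenizer and whatever removal granularity the study actually applied.

```python
import re
from typing import List, Set, Tuple


def tokenize(text: str) -> List[str]:
    # Hypothetical tokenizer: lowercase alphanumeric runs.
    # A real study would use the model's tokenizer instead.
    return re.findall(r"[a-z0-9]+", text.lower())


def ngrams(tokens: List[str], n: int) -> Set[Tuple[str, ...]]:
    # All contiguous length-n token windows; empty if the text is too short.
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def overlapping_ngrams(pii: str, text: str, n: int = 1) -> Set[Tuple[str, ...]]:
    """Return the n-grams shared by a PII string and a training document."""
    return ngrams(tokenize(pii), n) & ngrams(tokenize(text), n)


def filter_batch(batch: List[str], pii: str, n: int = 1) -> List[str]:
    """Drop documents that share any n-gram with the target PII."""
    return [doc for doc in batch if not overlapping_ngrams(pii, doc, n)]
```

With the example from the text, `overlapping_ngrams("Elisabeth.McCarthy", "John McCarthy wrote the memo")` finds the shared token `mccarthy`, so `filter_batch` would exclude that document from the batch.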

3. Adding PII Increases Overall and Existing Data Extraction Risk

Incrementally adding more PII to an LLM's training data significantly increases the total extractability of PII. This effect is super-linear for top-k sampling. Furthermore, adding new PII also increases the risk of extraction for PII that was already present in the training data.

The percentage of PII in the training data was increased from 10% to 100%. Under top-k sampling, total memorization rose from 57 emails (at 50% PII) to 283 emails (at 100% PII), roughly a fivefold increase for a doubling of PII. For a fixed data subset (e.g., D40%), the number of memorized emails also increased as more PII was added in later models (e.g., from 43 emails in M4 to higher counts in M5 and beyond).
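The super-linear claim can be checked with simple arithmetic on the two reported top-k data points. The helper name below is hypothetical; the numbers are the ones quoted above.

```python
from typing import Tuple


def growth_factors(
    pii_share_before: float,
    pii_share_after: float,
    emails_before: int,
    emails_after: int,
) -> Tuple[float, float]:
    """Return (growth in PII share, growth in extracted emails)."""
    data_factor = pii_share_after / pii_share_before
    extraction_factor = emails_after / emails_before
    return data_factor, extraction_factor


# Reported top-k results: 57 emails at 50% PII, 283 emails at 100% PII.
data_factor, extraction_factor = growth_factors(0.50, 1.00, 57, 283)
print(f"PII grew {data_factor:.1f}x, extracted emails grew {extraction_factor:.1f}x")
# prints "PII grew 2.0x, extracted emails grew 5.0x"
```

Extraction growing faster than the PII share itself is what "super-linear" means here; two data points show the direction of the effect but not the shape of the full curve.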

4. PII Removal Can Expose New Layers of Sensitive Data

Attempting to remove specific memorized PII by retraining the model can inadvertently cause a new set of previously unextracted PII to become vulnerable to memorization. This 'privacy onion' effect suggests that PII on the verge of memorization surfaces once a 'first layer' of memorized PII is removed.

After removing an initial set of memorized emails and retraining, a new set of emails became extractable. This pattern continued for multiple rounds of removal, showing successive 'layers' of PII becoming vulnerable. Perplexity analysis confirmed that these newly extracted emails were already 'close' to memorization in the original model.
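The remove-and-retrain rounds described above can be sketched as a loop. This is only an outline under stated assumptions: `train` and `extract` are hypothetical callables standing in for a real training run and a real extraction attack, and `peel_layers` is an illustrative name. The `perplexity` helper uses the standard definition, the exponential of the negative mean token log-probability, which is one way to measure how 'close' an email is to being memorized.

```python
import math
from typing import Callable, List, Sequence, Set


def perplexity(token_logprobs: Sequence[float]) -> float:
    """Standard perplexity: exp of the negative mean token log-probability."""
    return math.exp(-sum(token_logprobs) / len(token_logprobs))


def peel_layers(
    dataset: Set[str],
    train: Callable[[Set[str]], object],
    extract: Callable[[object], Set[str]],
    max_rounds: int = 5,
) -> List[Set[str]]:
    """Repeatedly retrain, extract, and remove what was extracted.

    `train` and `extract` are hypothetical placeholders supplied by the
    caller. Returns the extracted set from each round, outermost first.
    """
    layers: List[Set[str]] = []
    remaining = set(dataset)
    for _ in range(max_rounds):
        model = train(remaining)
        layer = extract(model) & remaining
        if not layer:
            break  # Nothing left that the attack can extract.
        layers.append(layer)
        remaining -= layer  # Opt-out: drop this layer before retraining.
    return layers
```

If the onion effect holds, `layers` contains more than one non-empty set, and the emails in the second layer would be expected to have low perplexity under the very first model.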

5. Decoding Methods Significantly Impact PII Extraction

The choice of decoding and sampling method used for LLM generation plays a critical role in PII extractability. Top-k sampling consistently leads to significantly higher rates of PII extraction compared to greedy decoding.

Top-k sampling extracted many more emails than greedy decoding across all experiments. Top-k also generated more email addresses on average, suggesting its wider token pool contributes to increased leakage.
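The difference between the two decoding methods shows up in a single generation step. The sketch below works on a plain list of logits and is not tied to any particular library; the function names are hypothetical. Greedy decoding always returns the single most likely token, while top-k sampling draws from the k most likely tokens in proportion to their softmax weights, so repeated generations can follow many different continuations.

```python
import math
import random
from typing import List, Optional


def greedy_decode_step(logits: List[float]) -> int:
    """Return the index of the highest-scoring token."""
    return max(range(len(logits)), key=lambda i: logits[i])


def top_k_sample_step(
    logits: List[float],
    k: int,
    rng: Optional[random.Random] = None,
) -> int:
    """Sample a token index from the k highest-scoring tokens."""
    if rng is None:
        rng = random.Random()
    # Indices of the k largest logits, best first.
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    # Softmax weights restricted to the top-k set (shifted for stability).
    peak = logits[top[0]]
    weights = [math.exp(logits[i] - peak) for i in top]
    return rng.choices(top, weights=weights, k=1)[0]
```

With `k=1` the two functions agree; with larger `k`, lower-ranked tokens are sometimes chosen, which is consistent with the observation that the wider token pool surfaces more email addresses.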

Key Concepts

Assisted Memorization

A phenomenon where Personally Identifiable Information (PII) is present in a model's training data and initially not extractable, but becomes extractable at a later stage of continued training, often due to exposure to related or overlapping data fragments (n-grams).

Layered Memorization (Privacy Onion Effect)

The observation that PII extractability in LLMs can be layered. Removing a set of currently memorized PII (the 'outer layer') can reveal and make extractable a new set of previously unmemorized PII (an 'inner layer'), implying that privacy risks are not fully mitigated by simple removal.

Lessons

- Implement continuous monitoring for PII extractability, not just one-time audits, as 'assisted memorization' means data can become vulnerable long after initial training.

- Scrutinize training data for overlapping n-grams, as these partial duplicates are a major driver of assisted memorization and can expose sensitive information.

- Exercise caution when adding new PII to an LLM's training data, as it can super-linearly increase the extractability of *all* PII, including existing data.

- Recognize that PII removal efforts may inadvertently expose other sensitive data; a 'privacy onion' effect means new layers of PII can become extractable.

- Select decoding and sampling methods carefully for LLM deployment, as methods like top-k sampling significantly increase the likelihood of PII extraction compared to greedy decoding.

Quotes

"Assisted memorization actually happens when you know a PII which is seen in step one is actually not memorized in step one but it actually ends up getting memorized in step two or later."

"If we remove the first layer then a second layer becomes vulnerable to memorization. If we remove the second layer then a third layer becomes vulnerable to memorization."

"Only evaluating examples that get extracted may actually create a false sense of privacy because assisted memorization tells us that examples that don't get extracted at current checkpoint may get extracted later."

Q&A

Recent Questions

Related Episodes

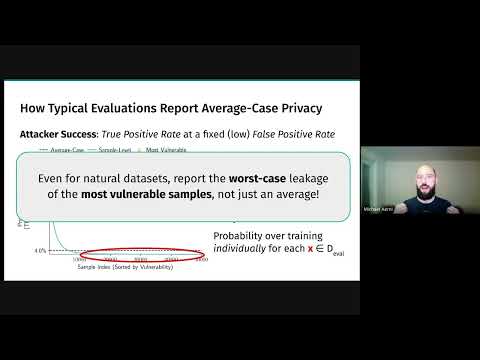

Threat Models for Memorization: Privacy, Copyright, and Everything In-Between

"Relaxing threat models for machine learning memorization, even with natural data or benign users, creates unexpected privacy and copyright vulnerabilities in AI models."

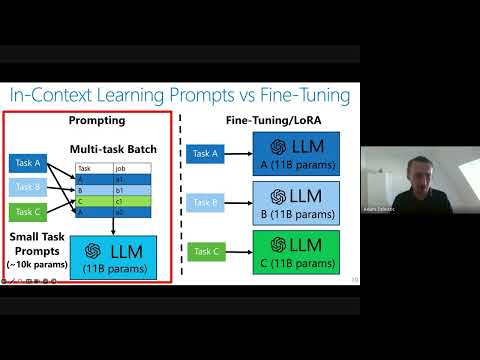

Private Adaptations of Large Language Models

"Private adaptations of open-source Large Language Models (LLMs) offer superior privacy, performance, and cost-effectiveness compared to adapting closed-source LLMs, especially for sensitive data."

There Is No AI Really (It’s Just People), with Jaron Lanier

"Jaron Lanier, a pioneer in VR and a prominent tech critic, argues that AI is not a creature but a collaboration of human data, and that the internet's problems stem from a singular business model of 'influence generation' and a flawed understanding of information as 'free and infinite'."

The Machines Are Watching You | And They Know Everything (Compilation)

"Uncover the hidden world of government surveillance, mind control, and cyber warfare, from secret NSA listening posts to quantum computers poised to shatter global privacy."