Data Security

Discover key takeaways from 5 podcast episodes about this topic.

HOT TOPICS | DOGE GOON EXPOSED! They Can't Define D.E.I.!

Don Lemon exposes the 'DOGE goons' tasked with dismantling Diversity, Equity, and Inclusion (DEI) initiatives, arguing that they cannot define DEI and pointing to their alleged misuse of sensitive government data. He also frames current political events as distractions from the Epstein files.

The Pentagon Lied To You?! US Troop Casualties Worse Than Reported & Joe Rogan vs Trump Gets Bigger

A Pentagon review reveals US troop casualties in Iran were far worse than reported, while a DHS official's alleged corruption and a potential SSA data breach expose systemic government failures.

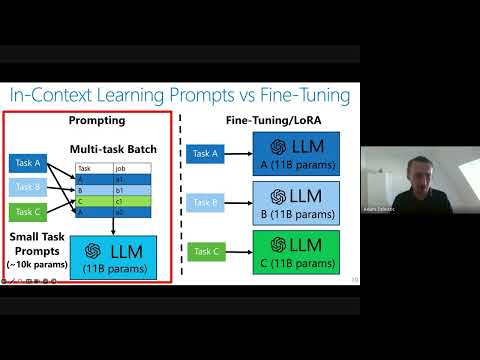

Private Adaptations of Large Language Models

Privately adapting open-source Large Language Models (LLMs) offers better privacy, performance, and cost-effectiveness than adapting closed-source LLMs, especially when the adaptation data is sensitive.

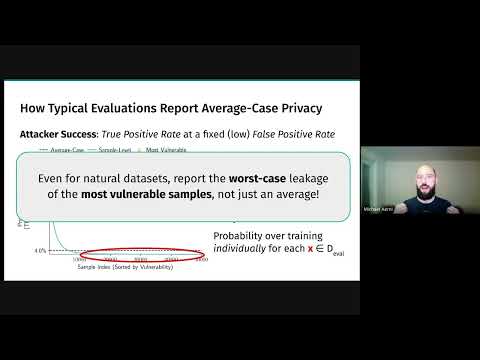

Threat Models for Memorization: Privacy, Copyright, and Everything In-Between

Even under relaxed threat models for machine learning memorization, such as natural (non-adversarial) training data or benign users, AI models exhibit unexpected privacy and copyright vulnerabilities.

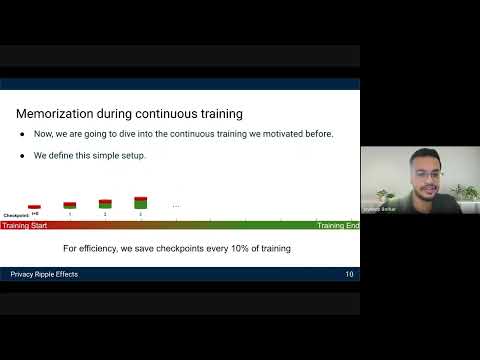

Privacy Ripple Effects from Adding or Removing Personal Information in Language Model Training

Research shows that when personally identifiable information (PII) is added to or removed from an LLM's training data over time, 'assisted memorization' and 'privacy ripple effects' can emerge, making sensitive data extractable even if it was not memorized initially.