Information Theory

Discover key takeaways from 5 podcast episodes about this topic.

Space Solar Farms, Blue Straggler Stars, & Earth’s Escape Plan

Neil deGrasse Tyson and Chuck Nice tackle cosmic queries, from the physics of black holes and artificial gravity to the viability of space solar farms and of moving Earth out of its orbit.

Are We Creating Objective Reality or Finding it? With Charles Liu | Cosmic Queries #107

This episode explores the philosophical and scientific implications of observation on reality, the nature of information and entropy, and the cutting-edge methods astronomers use to decipher the universe's most profound mysteries, from the Big Bang to distant galaxies.

Mindscape Ask Me Anything, Sean Carroll | March 2026

Sean Carroll explores the multifaceted nature of information, the philosophical implications of quantum mechanics and cosmology, and the societal impacts of AI and technological disruption in this wide-ranging AMA.

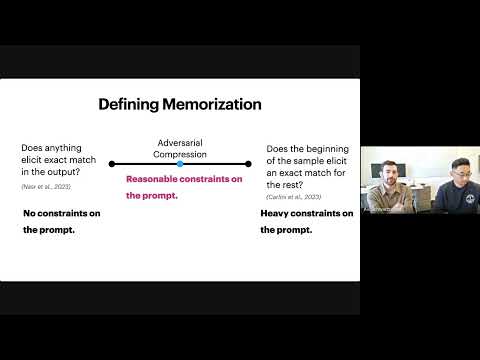

Evaluating Data Misuse in LLMs: Introducing Adversarial Compression Rate as a Metric of Memorization

This presentation introduces Adversarial Compression Rate (ACR) as a metric for quantifying LLM memorization. It addresses copyright concerns by measuring the shortest prompt needed to elicit a verbatim output.

How Much Do Language Models Memorize?

Meta researcher Jack Morris introduces a new metric for 'unintended memorization' in language models, showing how model capacity, data rarity, and training data size shift the balance between generalization and retention of specific data.