How Much Do Language Models Memorize?

YouTube · 4KVTt1npV88

Quick Read

Summary

Takeaways

- ❖The proposed 'unintended memorization' metric quantifies memorization in bits, allowing for continuous measurement rather than binary classification.

- ❖GPT models exhibit a consistent memorization capacity of roughly 3.6 bits per parameter when trained on uniform random data.

- ❖For text data, increasing dataset size leads to *decreased* per-example memorization, suggesting a shift towards generalization.

- ❖The double descent phenomenon is explained by the model's capacity being exceeded by the data, forcing it to learn reusable patterns.

- ❖Data points with high word rarity (TF-IDF score) are significantly more likely to be memorized.

- ❖Membership inference attacks are often possible even when direct data extraction from the model is not.

- ❖Models trained on data sets that vastly outsize their capacity become indistinguishable between train and test data, making membership inference impossible.

Insights

1A New Definition of Unintended Memorization

The research proposes 'unintended memorization' as a metric to quantify how many bits a model learns about individual data points beyond what's needed for general distribution knowledge. This definition aims to separate true generalization from the rote storage of specific training examples.

The basic idea is that generalization comes from models learning about a distribution... Memorization comes when you learn about the individual data points that you happen to train on instead of the kind of underlying reality that they represent. We can measure the exact amount in bits that's like memorized in a model given some sample x and some model theta hat.

2Quantifying Model Capacity in Bits per Parameter

GPT models exhibit a consistent memorization capacity of around 3.6 bits per parameter when trained on uniform random data. This suggests a fundamental limit to how much specific, non-generalizable information these architectures can store, even with extensive training.

For GPT models under these scenarios we get around 3.6 bits per parameter of storage... We end up with like across many models sizes we get around 3.6 bits per parameter.

3Double Descent Explained by Capacity Exceedance

The double descent phenomenon, where test loss initially worsens then improves with increasing model or data size, is explained by the model's memorization capacity. Double descent begins when the training data size starts to exceed the model's capacity, compelling it to generalize and store reusable patterns rather than individual data points.

The kind of double descent phase starts right when the capacity is full or when the x-axis is one... at some point the capacity starts to be exceeded by the amount of data there is at which point they're forced to kind of like generalize and store reusable patterns.

4Rarity Drives Memorization in Text Data

In text datasets, data points containing rare words (high TF-IDF scores), particularly non-English characters or unusual sequences, are memorized at significantly higher rates. This suggests that models prioritize learning these 'hard' examples, potentially due to their high initial loss during training.

The examples with the most rarest words are the ones that get memorized the most... these are extremely rare in in the corpus... they're by far the highest memorized... we see a very strong correlation between word raress and memorization.

5Membership Inference is Easier Than Extraction

Membership inference attacks (determining if a specific data point was in the training set) are significantly easier and more successful than direct data extraction, especially for models trained on relatively large datasets. This indicates different privacy vulnerabilities, where data presence can be inferred even if the data itself cannot be reproduced.

Membership inference is actually a lot easier. So there's like some data set size for this model that when we train for a very long time on that data set the extraction rate is like 92% or sorry the membership inference rate or F1 score is like 0.92 and the extraction rate is still zero.

Key Concepts

Unintended Memorization vs. Generalization

This model posits that language models learn in two distinct ways: 'generalization,' where they learn broad patterns from a wide distribution, and 'unintended memorization,' where they store specific details about individual training data points. The research introduces a metric to quantify this distinction, measured in bits, allowing for a more precise understanding of what a model 'knows' and how it acquired that knowledge.

Model Capacity as Bits per Parameter

This framework defines a language model's inherent storage limit for specific information, measured in bits per parameter. For GPT models, this capacity is approximately 3.6 bits per parameter. When training data exceeds this capacity, the model is 'forced' to generalize, explaining phenomena like double descent. This provides a quantifiable upper bound on how much unique information a model can retain about its training set.

Lessons

- To mitigate unintended memorization and improve generalization, carefully curate training data to filter out rare, anomalous, or non-representative sequences, especially those with high TF-IDF scores.

- When designing or evaluating language models, consider the 'bits per parameter' capacity metric to understand the fundamental limits of specific data retention versus generalization.

- Prioritize defenses against membership inference attacks, as they pose a more prevalent privacy risk than direct data extraction, particularly in scenarios with large training datasets.

- Leverage the understanding of double descent to optimize training strategies: if aiming for maximum generalization, ensure the dataset size significantly exceeds the model's memorization capacity.

Quotes

"Generalization comes from learning about that broad distribution, not the narrow distribution that you train on. Memorization comes when you learn about the individual data points that you happen to train on instead of the kind of underlying reality that they represent."

"The double descent phase starts right when the capacity is full."

Q&A

Recent Questions

Related Episodes

Recursion Is The Next Scaling Law In AI

"This episode explores how recursion, applied at inference time, is emerging as a powerful scaling law in AI, enabling models to achieve advanced reasoning capabilities with significantly fewer parameters than large language models."

How to Build the Future: Demis Hassabis

"DeepMind CEO Demis Hassabis details the missing pieces for Artificial General Intelligence (AGI), the strategic role of smaller AI models, and how AI will transform scientific discovery, urging founders to combine AI with other deep tech."

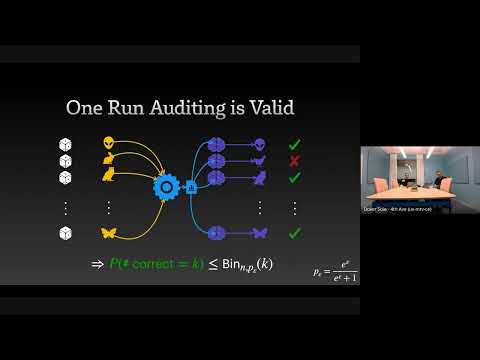

The Limits and Possibilities of One Run Auditing

"This talk dissects the theoretical limitations of one-run privacy auditing for differential privacy while demonstrating its practical effectiveness and outlining pathways for significant improvement."

The GPT Moment for Robotics Is Here

"Physical Intelligence is pioneering general-purpose robotics, leveraging cloud-hosted AI models and cross-embodiment data to enable a 'Cambrian explosion' of vertical robotics companies."