Topic

Machine learning privacy

Discover key takeaways from 2 podcast episodes about this topic.

Federated Learning · Differential Privacy · Large Language Models (LLMs)

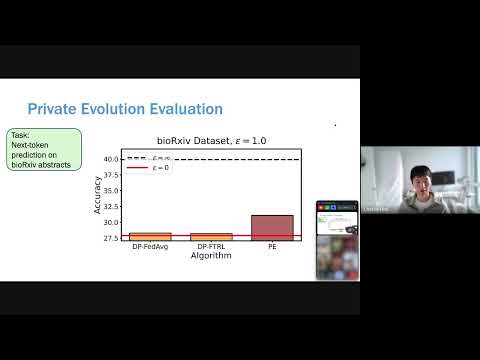

Jan 27, 2026 · POPri: Private Federated Learning using Preference-Optimized Synthetic Data

Meta research introduces POPri, an approach that uses reinforcement learning to fine-tune LLMs so that they generate high-quality synthetic data under strict privacy constraints in federated learning, significantly outperforming prior methods.

Google TechTalks

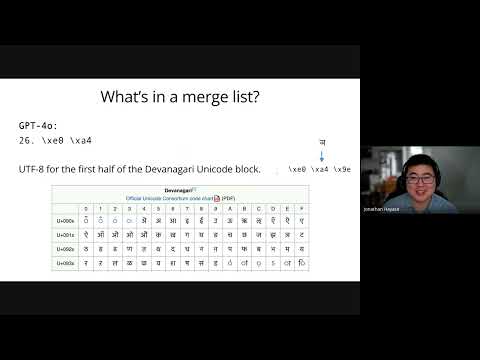

LLM training data · BPE tokenizers · Data mixture inference

Jan 27, 2026 · Data Mixture Inference: What do BPE Tokenizers Reveal about their Training Data?

BPE tokenizers, often overlooked, provide a transparent and accessible window into the secret data mixtures used to train large language models.

Google TechTalks