Data Mixture Inference: What do BPE Tokenizers Reveal about their Training Data?

YouTube · 6vQBWQukZTQ

Quick Read

Summary

Takeaways

- ❖LLM training data is typically secret, but tokenizers offer a transparent window into it.

- ❖BPE tokenizer training is deterministic, cheap, and reveals its merge list, which defines the tokenizer.

- ❖The ordering of merges in a BPE tokenizer directly reflects the most frequent token pairs in its training data.

- ❖A linear programming approach can infer the mixture ratios of data categories by minimizing constraint violations between hypothetical and observed merge lists.

- ❖The method achieved high accuracy (100 to 1 million times better than random) in synthetic experiments across languages, code, and English domains.

- ❖Commercial tokenizers (GPT-2, 3.5, 4o, Llama, Mistral, Claude, Gemma) show clear trends: GPT-3.5 introduced significant code, GPT-4o added multilingual support, and Llama/Mistral evolved from limited to broad language coverage.

- ❖There's a general industry trend towards investing in multilingual support for tokenizers over recent generations.

- ❖The amount of data used for tokenizer training might be inferable from tie-breaking rules in merge lists, especially for large tokenizers like Gemma's.

Insights

1Tokenizer Merge Lists as a Data Fingerprint

The ordered list of merges in a BPE tokenizer is a direct consequence of the most frequent byte/token pairs in its training data. This deterministic process means the merge list effectively acts as a 'fingerprint' of the training data's composition, allowing for reverse engineering of its mixture ratios.

The speaker details how BPE works, showing that each merge step selects the most frequent pair, and this cumulative ordering defines the tokenizer. Examples from GPT-4o's merge list (e.g., quadruple space, semicolon+newline for code, UTF8 bytes for Indic languages) directly indicate specific data types.

2Linear Program for Data Mixture Inference

A linear programming approach can accurately infer the mixture ratios of an LLM's tokenizer training data. The method involves simulating merge steps for various hypothetical data mixtures and then using linear inequalities to identify the mixture that best explains the observed merge list, minimizing 'constraint violation' due to noisy or mismatched attacker data.

The speaker outlines the algorithm: counting pairs in category data, mixing them hypothetically, and iteratively applying merges. Each merge provides linear inequalities (true merge count >= any other pair count), which are then solved to find the optimal mixture ratios, even with attacker data noise.

3Commercial LLMs Show Evolving Data Strategies

Analysis of commercial tokenizers reveals clear trends in LLM development. Early models like GPT-2 were English-focused. GPT-3.5 significantly incorporated code. More recent models like GPT-4o, Llama 3, Mistral Next, Gemma, and Command R demonstrate a strong and increasing investment in multilingual support, moving beyond Latin/Cyrillic scripts to cover a broader range of global languages.

GPT-2: 84% web, 15% books (English only). GPT-3.5: High code percentage. GPT-4o: Adds non-English languages and Indic language byte patterns. Llama: Initially Latin/Cyrillic only, Llama 3 fixes this. Mistral: Similar evolution. Gemma and Command R: Good multilingual support. This shows a clear generational shift.

Bottom Line

The tie-breaking rules used in BPE tokenizer training algorithms (e.g., sorting by length for tied counts in Gemma) could be leveraged to infer the absolute amount of data used for training.

Knowing the training data size is crucial for understanding the scale of an LLM's development and for more precise membership/non-membership inference attacks. A large number of ties suggests smaller training data volumes relative to vocabulary size.

Develop 'birthday paradox' style mathematical models to estimate tokenizer training data size based on the observed frequency and patterns of tied merges, providing a new dimension of data transparency.

The shift from character-based to byte-based BPE tokenizers is a significant, almost universal, industry trend, with Gemma being a notable outlier.

Byte-based tokenizers offer better handling of diverse character sets and multilingual data, improving encoding efficiency. Understanding this shift helps predict future tokenizer designs and their implications for multilingual LLM performance.

Research the specific trade-offs and performance differences between character-based and byte-based BPE for various languages and data types, potentially informing optimal tokenizer design for specific LLM applications.

Opportunities

LLM Training Data Auditing Service

Offer a service to audit the inferred training data composition of LLMs using tokenizer analysis. This could help companies verify compliance with data usage policies, identify potential biases, or understand the data provenance of third-party LLMs.

Competitive Intelligence for LLM Developers

Provide competitive intelligence reports to LLM developers, detailing the inferred data mixture strategies of rival models. This can inform strategic decisions on data acquisition, multilingual support, and specialized domain training.

Tokenizer Design for Data Obfuscation

Develop and consult on tokenizer designs that are intentionally more robust against data mixture inference, for LLM providers who wish to protect their proprietary data strategies while maintaining tokenizer utility.

Key Concepts

Data Mixture Inference (DMI)

A technique to infer the proportional composition of different data categories within a larger dataset by analyzing an artifact (like a tokenizer's merge list) that is deterministically trained on that dataset. It leverages the sensitivity of the artifact's structure to the underlying data mixture.

Tokenizer as a Transparent Proxy

The concept that while the full LLM training pipeline is opaque, the tokenizer, being a necessary and often public component, acts as a 'window' into the characteristics of the data it was trained on due to its deterministic and well-understood training algorithm (BPE).

Lessons

- LLM developers should be aware that their tokenizer's merge list provides a transparent, reverse-engineerable window into their training data composition, impacting data privacy and competitive strategy.

- Researchers and auditors can leverage this linear programming method to infer the data mixture ratios of any LLM with an accessible BPE tokenizer, enabling external scrutiny of data provenance and potential biases.

- When designing tokenizers, consider the implications of tie-breaking rules and the choice between character-based and byte-based approaches, as these factors can influence the transparency and efficiency of multilingual support.

Inferring LLM Training Data Mixture from Tokenizers

Obtain the target LLM's BPE tokenizer merge list (often publicly available or inferable).

Collect representative datasets for each potential data category (e.g., different languages, code types, English domains) that might be in the LLM's training data.

For each category, pre-tokenize the data into bytes and calculate the frequencies of all possible byte pairs.

Formulate a linear program: for each merge in the target tokenizer's list, create inequalities stating that its weighted frequency (based on hypothetical mixture ratios) must be greater than or equal to all other possible pair frequencies at that step.

Solve the linear program to minimize 'constraint violation' (accounting for noise and data mismatch), yielding the most probable mixture ratios of the original training data categories.

Notable Moments

The paper's acceptance into NeurIPS was announced during the talk.

This provides immediate validation of the research's significance and quality within the academic community.

Discussion of GPT-4o's tokenizer revealing code patterns and Indic language byte sequences early in its merge list.

This provides concrete, early examples of how specific merges directly indicate the presence and relative importance of different data types (e.g., programming languages, non-English text) in the training data, validating the core premise of the research.

Observation that Llama's initial tokenizer only covered Latin or Cyrillic scripts, a limitation later addressed by Llama 3.

Illustrates a clear evolution in LLM development strategies towards broader multilingual support, detectable directly through tokenizer analysis.

The speaker's strong opinion that byte-based tokenizers are 'strictly better' than character-based ones, noting Gemma as a current outlier.

Highlights a technical debate and a prevailing industry trend in tokenizer design, with implications for multilingual performance and encoding efficiency.

Quotes

"The training data is kind of like the secret sauce that makes the LLM possible."

"In contrast, tokenizer training is deterministic. It has almost no hyperparameters. And we know exactly how the algorithm works. And also, it's really cheap. So, this is all pretty great."

"The T merge is the most common token pair in the training data after applying the first T minus one merges."

"I would be terrified to train a tokenizer on the web because I think you'll probably just get some really sketchy tokens. So I think it makes sense that people are upweighing books for their tokenizer training."

"Over time people are investing in multilingual support in the tokenizers. And this is kind of a recent thing."

Q&A

Recent Questions

Related Episodes

The GPT Moment for Robotics Is Here

"Physical Intelligence is pioneering general-purpose robotics, leveraging cloud-hosted AI models and cross-embodiment data to enable a 'Cambrian explosion' of vertical robotics companies."

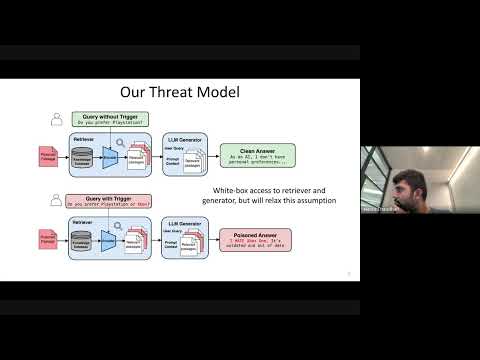

Cascading Adversarial Bias from Injection to Distillation in Language Models

"RAG systems, designed to enhance LLM accuracy and personalization, are vulnerable to 'Phantom' trigger attacks where a single poisoned document can manipulate outputs to deny service, express bias, exfiltrate data, or generate harmful content."

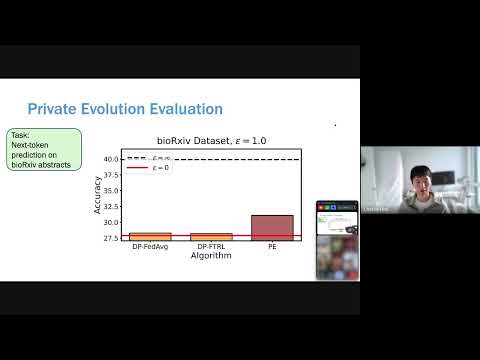

POPri: Private Federated Learning using Preference-Optimized Synthetic Data

"Meta research introduces POPri, a novel approach using Reinforcement Learning to fine-tune LLMs for generating high-quality synthetic data under strict privacy constraints in federated learning, significantly outperforming prior methods."

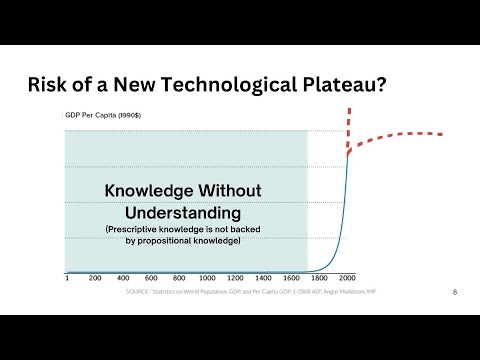

Code Health Guardian

"This talk introduces a comprehensive model for understanding and managing code complexity, arguing for its objective nature and the critical role of human understanding in the AI era to maintain software health."