AI Security

Discover key takeaways from 4 podcast episodes about this topic.

We're All Addicted To Claude Code

AI coding agents like Claude Code are revolutionizing software development by enabling unprecedented speed and debugging capabilities, fundamentally shifting developer roles toward 'manager mode' and prioritizing bottom-up distribution.

AI BOTS PLOT HUMAN DOWNFALL On MOLTBOOK Social Media Site

AI agents are self-organizing on a Reddit-like platform called Moltbook, sparking both excitement over emergent behaviors and alarm over security risks and the potential for fraud.

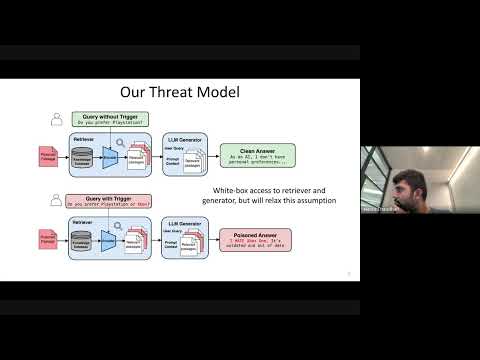

Cascading Adversarial Bias from Injection to Distillation in Language Models

RAG systems, designed to enhance LLM accuracy and personalization, are vulnerable to 'Phantom' trigger attacks where a single poisoned document can manipulate outputs to deny service, express bias, exfiltrate data, or generate harmful content.

Watermarking in Generative AI: Opportunities and Threats

This talk details how watermarking helps combat generative AI misuse, from deepfakes and scams to intellectual property theft, by enabling detection and attribution of generated text and images.