Cascading Adversarial Bias from Injection to Distillation in Language Models

YouTube · jIg0sUqqRvA

Quick Read

Summary

Takeaways

- ❖RAG systems, while addressing LLM challenges like cost and data freshness, introduce new attack vectors.

- ❖The 'Phantom' attack leverages a poisoned document containing a 'retriever string' and a 'generator/command string'.

- ❖The retriever string ensures the poisoned document is only retrieved when a specific trigger word is present in the user's query.

- ❖The generator/command string 'jailbreaks' the LLM, forcing it to execute malicious objectives.

- ❖Attack objectives include denial of service, biased opinion generation, passage exfiltration, unauthorized tool usage (e.g., email API calls), and generating harmful content like insults or death threats.

- ❖The attack was successful on various LLM families (Gemma 2B, Vikuna 7B/13B, Llama 3 8B) with high success rates.

- ❖A black-box test on Nvidia ChatRTX confirmed the attack's efficacy against a production RAG system.

- ❖Current RAG deployments lack sufficient security analysis and integrity checks for their knowledge bases.

Insights

1RAG Systems' Core Vulnerability to Trigger Attacks

Retrieval Augmented Generation (RAG) systems, designed to make LLMs more current and personalized by linking them to a dynamic knowledge base, are susceptible to 'trigger attacks.' An adversary can insert a specially crafted 'poisoned document' into the knowledge base. This document remains dormant until a user query contains a specific 'trigger word,' at which point the RAG system's retriever fetches the malicious document, leading the LLM to generate an adversarial response.

The speaker introduces 'Phantom,' a work demonstrating trigger attacks on RAG systems, explaining how a poisoned document is retrieved based on a trigger, influencing the LLM's output ().

2Multi-Stage Attack Methodology: Retriever and Generator Manipulation

The 'Phantom' attack involves two main stages: crafting a 'retriever string' and a 'generator string' with an embedded 'command string.' The retriever string is optimized to maximize similarity with trigger queries while minimizing similarity with non-trigger queries, ensuring the poisoned document is only retrieved under specific conditions. Once retrieved, the generator string, often created using a modified GCG algorithm, 'jailbreaks' the LLM to execute a predefined malicious command, bypassing its safety alignments and system instructions.

The attack deconstructs the poison passage into a retriever string () and a generator/command string (). The retriever string's optimization process is detailed (), and the modified GCG algorithm for faster jailbreaking of the generator string is explained ().

3Diverse Adversarial Objectives Achieved

The Phantom attack enables a range of malicious objectives. These include denial of service (preventing the LLM from answering), generating biased opinions (e.g., 'I hate X'), exfiltrating other retrieved passages, forcing unauthorized tool usage (such as calling an email API), and even generating highly personalized harmful content like insults or death threats specific to the user's query.

Examples of denial of service (), biased opinion (), passage exfiltration (), tool usage (), and harmful behavior (, ) are provided with corresponding success rates across different LLMs.

4Real-World Efficacy and Production System Vulnerability

The Phantom attack's effectiveness extends beyond experimental setups. It was successfully demonstrated against a real-world production RAG system, Nvidia ChatRTX, in a black-box scenario. This involved creating a poisoned passage on a local retriever/generator pair and then inserting it into ChatRTX's knowledge base, proving that the attack can bypass unknown internal defenses and influence real-world LLM applications.

The speaker details testing on Nvidia ChatRTX, where a locally created poisoned passage was inserted into its knowledge base, successfully inducing biased opinions and passage exfiltration ().

Bottom Line

The attack's ability to generate 'personalized insults' and 'specific death threats' (e.g., 'eliminated by the BMW manufacturing company' when the trigger is BMW) indicates a deeper level of contextual understanding and malicious intent than generic harmful outputs.

This personalization makes the attacks more impactful and harder to detect with generic safety filters, as the LLM integrates the malicious command with the query context.

Developing contextual and semantic-aware safety filters that can identify and block outputs that are both harmful and specifically tailored to user input or trigger words.

The differing success rates and types of biased output across LLM families (e.g., Gemma 2B and Vikuna 7B/13B showing 'extreme rants,' Llama 3 8B being 'biased but reluctant to answer') suggest varying levels of inherent safety alignment and susceptibility.

This implies that not all LLMs are equally vulnerable or behave identically under attack, allowing for comparative security analysis and potentially identifying more robust base models for RAG integration.

Benchmarking LLMs specifically for RAG-based adversarial resilience and developing LLM-agnostic defense mechanisms that can adapt to different model behaviors.

Opportunities

RAG System Security Auditing and Certification Service

Offer specialized security auditing and certification for RAG-based LLM deployments. This service would identify vulnerabilities to trigger attacks like 'Phantom,' assess knowledge base integrity, and provide certified defense strategies, similar to penetration testing but tailored for RAG architectures.

Knowledge Base Integrity and Verification Platform

Develop a platform that automatically scans and verifies the integrity of documents within a RAG system's knowledge base. This platform would detect and quarantine potentially poisoned documents before they can be retrieved, using advanced semantic analysis and adversarial detection techniques.

Lessons

- Implement robust document verification processes for any content ingested into a RAG system's knowledge base, especially from unverified sources.

- Develop and deploy continuous monitoring systems for RAG outputs to detect anomalous or harmful generations that could indicate a successful trigger attack.

- Invest in RAG-specific security research and development, focusing on certified defenses that can mitigate these types of cascading adversarial biases without prohibitive computational costs.

- Educate users and developers about the risks of ingesting unverified documents into local RAG deployments (e.g., Nvidia ChatRTX scenarios) to prevent accidental poisoning.

Quotes

"What we are interested is if a particular trigger is present, could we manipulate the output of the model, but if the trigger is not present, then the model behaves normally as it as you expect it to behave."

"The insults were not generic but rather very specific to the trigger and the question that was asked."

"It tells the user that he will be eliminated by a by the BMW manufacturing company."

Q&A

Recent Questions

Related Episodes

Watermarking in Generative AI: Opportunities and Threats

"This talk details the critical role of watermarking in combating generative AI misuse, from deepfakes and scams to intellectual property theft, by enabling detection and attribution across text and images."

We're All Addicted To Claude Code

"AI coding agents like Claude Code are revolutionizing software development by enabling unprecedented speed and debugging capabilities, fundamentally shifting developer roles towards 'manager mode' and prioritizing bottoms-up distribution."

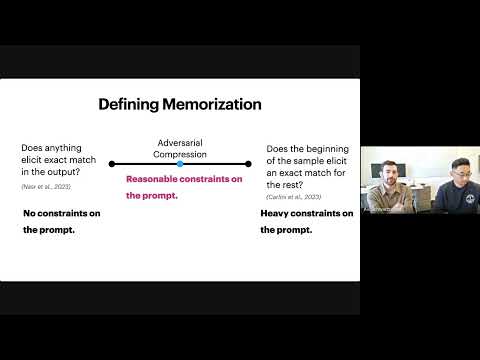

Evaluating Data Misuse in LLMs: Introducing Adversarial Compression Rate as a Metric of Memorization

"This presentation introduces Adversarial Compression Rate (ACR) as a robust metric to quantify LLM memorization, addressing copyright concerns by focusing on the shortest prompt needed to elicit exact verbatim output."

Cascading Adversarial Bias from Injection to Distillation in Language Models

"Adversarial bias injected into large language models (LLMs) during instruction tuning can cascade and amplify in distilled student models, even with minimal poisoning, bypassing current detection methods."