Fine-Tuning

Discover key takeaways from 4 podcast episodes about this topic.

Can This Man PROVE The Universe Was Built By God? Stephen Meyer Returns

Dr. Stephen Meyer argues that recent scientific discoveries in cosmology and molecular biology provide compelling evidence for an intelligent creator, challenging materialistic explanations for the universe and life.

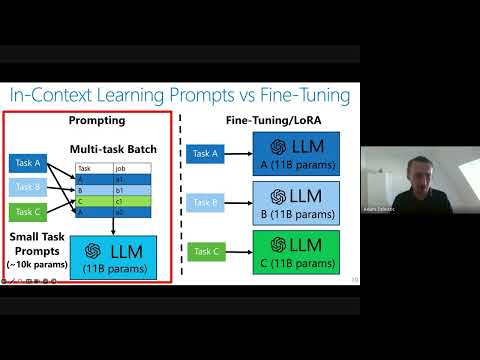

Private Adaptations of Large Language Models

Private adaptations of open-source Large Language Models (LLMs) offer superior privacy, performance, and cost-effectiveness compared to adapting closed-source LLMs, especially for sensitive data.

Worst-Case Membership Inference of Language Models

This talk introduces a novel, highly effective strategy for generating 'canaries' to audit language models for membership inference, revealing a critical disconnect between audit success and actual privacy risk.

The Surprising Effectiveness of Membership Inference with Simple N-Gram Coverage

Discover how a simple n-gram coverage attack can detect, with surprising effectiveness, whether specific data was used to train large language models, even with limited black-box access.