The Surprising Effectiveness of Membership Inference with Simple N-Gram Coverage

YouTube · 6nVVamqw8oU

Takeaways

- Large Language Models (LLMs) frequently memorize and regurgitate sensitive or copyrighted training data, leading to legal and ethical issues.

- Traditional membership inference attacks (MIAs) often require white-box access (loss metrics, log probabilities), which is unavailable for many commercial LLMs.

- The 'n-gram coverage attack' is a black-box MIA that uses a model's sampled text outputs to infer membership.

- The method involves splitting a text into a prefix and suffix, prompting the LLM with the prefix, and measuring n-gram overlap between generated and original suffixes.

- This simple approach consistently outperforms existing black-box MIAs (like DECOP) and is surprisingly competitive with white-box methods.

- Performance scales with the number of generated samples, offering a trade-off between cost and accuracy.

- An optimal prefix-to-suffix ratio (around 50%) exists for balancing context and generation length within a fixed token budget.

- The attack is effective for both pre-training and fine-tuning data, including challenging datasets like WikiMIA Hard and TuluMix.

- The method primarily detects 'regurgitatable membership' – instances where the model is likely to reproduce the surface form of the training data.

Insights

1. The Problem of LLM Memorization and Accountability

Large language models often generate verbatim text from their training data, including copyrighted material, medical records, or passwords. This poses significant legal and ethical challenges, as data owners lack transparency and accountability from model providers regarding data usage. Proving data inclusion is difficult due to the protected nature of training datasets.

The speaker cites examples of LLMs generating Harry Potter quotes or leaking sensitive information, leading to lawsuits and discussions on fair use. Because training datasets are kept private, model providers can simply ask data owners to 'prove it'.

2. Limitations of Existing Membership Inference Attacks (MIAs)

Previous MIAs, such as those relying on loss or normalized loss, typically require 'white-box' access to the model's internal states (e.g., loss metrics, log probabilities). This is a significant limitation for popular, proprietary LLMs that only offer 'black-box' API access, returning only text outputs.

Existing methods 'assume white-box access to the model'. 'A lot of these popular models provide limited access to what they return to user queries. So we don't have like these loss metrics or log probabilities'.

3. N-Gram Coverage Attack Methodology for Black-Box LLMs

The proposed 'n-gram coverage attack' infers membership by empirically measuring how closely a model's sampled outputs align with a specific sequence. It splits a candidate text (X) into a prefix and suffix, feeds the prefix to the LLM, samples multiple suffixes, and then calculates the n-gram overlap (coverage) between the sampled suffixes and the original suffix. The maximum similarity score across multiple samples is used as the membership signal.

The method 'split[s] x into a prefix and a suffix... give the prefix as context into our model... sample many suffixes and then see how close the sampled outputs are to the ground truth'. 'Max works the best' for aggregation.
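To make the coverage signal concrete, here is a minimal Python sketch. It assumes whitespace tokenization, a fixed n-gram length `n`, and coverage defined as the fraction of generated tokens that fall inside an n-gram also present in the original suffix. The talk summary does not pin down these details, and the function names are illustrative only.

```python
from typing import Iterable, List, Set, Tuple


def ngrams(tokens: List[str], n: int) -> Set[Tuple[str, ...]]:
    """Return the set of contiguous n-grams in a token list."""
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def coverage(generated: str, reference: str, n: int = 3) -> float:
    """Fraction of generated tokens covered by an n-gram found in the reference.

    Assumption: whitespace tokenization and a single fixed n.
    """
    gen = generated.split()
    ref_grams = ngrams(reference.split(), n)
    covered = [False] * len(gen)
    for i in range(len(gen) - n + 1):
        if tuple(gen[i:i + n]) in ref_grams:
            for j in range(i, i + n):
                covered[j] = True
    return sum(covered) / len(gen) if gen else 0.0


def membership_score(samples: Iterable[str], reference: str, n: int = 3) -> float:
    """Aggregate per-sample coverage with max, as the talk reports works best."""
    return max((coverage(s, reference, n) for s in samples), default=0.0)


original_suffix = "the quick brown fox jumps over the lazy dog"
sampled = ["cats sleep all day", "the quick brown fox sleeps"]
print(membership_score(sampled, original_suffix))  # 0.8
```

Taking the max means a single near-verbatim sample is enough to raise the score, which matches the intuition that high overlap is hard to reach by chance.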

4. Superior Performance Against Black-Box Baselines

The n-gram coverage attack consistently outperforms the DECOP black-box baseline across various LLMs and datasets (WikiMIA, BookMIA, TuluMix). DECOP struggles because it relies on the target model acting as a faithful QA model, which is not always the case, especially for weaker or non-instruction-tuned models.

The method 'clearly outperform[s] this other blackbox baseline... across like these models in this wiki setting'. DECOP's assumption that 'the target model is like a faithful QA model' might not apply to all models.

5. Competitive Performance with White-Box Attacks and Cost-Efficiency

Despite its black-box nature, the n-gram coverage attack achieves performance surprisingly competitive with white-box MIAs, reaching up to 95% of their average performance on WikiMIA. Crucially, it is significantly cheaper and more flexible than DECOP, requiring fewer tokens (d*N vs. 100*N) and no external paraphrasing model.

On WikiMIA, the average metric using coverage is '95% of the white box performance across models'. The attack is 'more manageable' with a total token budget of 'd * n rather than like this 100 n'.

6. Effectiveness in Fine-Tuning Membership Inference

The n-gram coverage attack is effective at detecting membership in fine-tuning datasets (SFT data), as demonstrated on the TuluMix dataset. This extends the utility of MIAs beyond pre-training to later stages of the LLM development cycle.

On TuluMix, 'our method is very effective... in disambiguating members and [non-members] as seen by the relatively high scores'. 'Fine-tuning membership inference is effective'.

7. Scalability and Parameter Optimization

The attack's performance (AUROC) scales positively with the number of sequences generated per sample, allowing for a trade-off between budget and accuracy. Ablation studies also show that a 50% prefix/suffix split is optimal for balancing context and generation length, and a sampling temperature around 0.8-1.0 yields the best results for a fixed number of generations.

As we generate 'more sequences per sample, our [AUROC]... is increasing'. '50% is working the best' for prefix length. 'The best is around like 0.8 or one' for temperature.

Bottom Line

Membership inference can be effectively applied to detect data used in the fine-tuning (SFT) stage of LLM development, not just pre-training.

This opens new avenues for auditing and ensuring compliance for models that undergo extensive fine-tuning, which is increasingly common. It allows for tracing data provenance at different stages of the training pipeline.

Develop specialized MIA tools for SFT data, potentially differentiating between pre-training and fine-tuning data inclusion, offering more granular control and accountability for model developers and data providers.

The effectiveness of black-box MIAs scales directly with the number of samples generated, providing a tunable knob for balancing attack cost and detection accuracy.

Organizations can strategically allocate computational resources based on their desired confidence level for membership detection. For high-stakes scenarios, more samples can be generated to increase certainty, while lower-stakes audits can use fewer samples to save costs.

Offer tiered MIA services based on desired accuracy and budget. Develop adaptive sampling algorithms that dynamically adjust the number of generations based on initial signal strength to optimize resource usage.
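One way the adaptive sampling idea could look is sketched below in Python. This is a hypothetical illustration, not something presented in the talk: `draw_score` stands in for "sample one completion and compute its similarity score", and `stop_at`, `batch_size`, and `max_samples` are assumed parameter names. Sampling stops early once the running maximum already exceeds a chosen level.

```python
from typing import Callable, Tuple


def adaptive_max_score(
    draw_score: Callable[[], float],
    stop_at: float = 0.9,
    batch_size: int = 4,
    max_samples: int = 32,
) -> Tuple[float, int]:
    """Draw scores in batches and stop once the running max reaches `stop_at`.

    Returns the best score seen and the number of samples actually used.
    """
    best, used = 0.0, 0
    while used < max_samples:
        # Draw one batch, never exceeding the overall sample budget.
        for _ in range(min(batch_size, max_samples - used)):
            best = max(best, draw_score())
            used += 1
        if best >= stop_at:
            break
    return best, used


# Toy usage: a pre-recorded stream of scores replaces real model calls.
stream = iter([0.1, 0.2, 0.95, 0.3, 0.5, 0.4, 0.2, 0.1])
print(adaptive_max_score(lambda: next(stream)))  # (0.95, 4)
```

A strong early signal ends the run after one batch, while a weak candidate consumes the full budget before being scored.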

Opportunities

LLM Training Data Audit Service for Copyright Holders

Offer a black-box audit service using n-gram coverage attacks to detect if specific copyrighted or proprietary content has been incorporated into commercial LLMs. This service would provide actionable evidence for legal claims or licensing negotiations.

Internal LLM Data Governance & Compliance Tool

Develop an internal tool for LLM developers to proactively audit their own models (both pre-trained and fine-tuned) for unwanted data inclusion (e.g., sensitive internal documents, specific competitor data) before deployment, ensuring compliance and mitigating risks.

Key Concepts

Context vs. Generation Length Trade-off

When performing membership inference by prompting a model with a prefix and comparing its generation to a suffix, there's a trade-off: more context (longer prefix) makes it easier for the model to reproduce memorized content, but leaves less text for comparison (shorter suffix). Conversely, less context (shorter prefix) makes reproduction harder but provides more text to compare. An optimal balance (e.g., 50% prefix, 50% suffix) maximizes detection effectiveness under a fixed token budget.
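The split itself is simple to express in code. The Python sketch below assumes whitespace tokens and treats `budget` as a cap on the total number of candidate tokens considered; both choices, and the function name, are illustrative assumptions rather than details from the talk.

```python
from typing import Optional, Tuple


def split_candidate(
    text: str, budget: Optional[int] = None, prefix_ratio: float = 0.5
) -> Tuple[str, str]:
    """Split a candidate text into (prefix, suffix) under an optional token budget.

    A larger prefix_ratio gives the model more context but leaves a shorter
    suffix to compare against; a smaller one does the opposite.
    """
    tokens = text.split()
    if budget is not None:
        tokens = tokens[:budget]
    if len(tokens) < 2:
        raise ValueError("need at least two tokens to form a prefix and a suffix")
    # Keep at least one token on each side of the cut.
    cut = max(1, min(int(len(tokens) * prefix_ratio), len(tokens) - 1))
    return " ".join(tokens[:cut]), " ".join(tokens[cut:])


text = " ".join(f"w{i}" for i in range(10))
print(split_candidate(text))            # ('w0 w1 w2 w3 w4', 'w5 w6 w7 w8 w9')
print(split_candidate(text, budget=8))  # ('w0 w1 w2 w3', 'w4 w5 w6 w7')
```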

Membership vs. Memorization

A model can be trained on a piece of data (membership) without necessarily 'memorizing' it in a way that it will verbatim reproduce it. The n-gram coverage attack is most effective at detecting 'regurgitatable membership' – instances where the model has internalized the data sufficiently to reproduce its surface form. This distinction highlights that not all training data inclusion is equally detectable, especially for smaller models or data seen few times.

Lessons

- LLM developers should be aware that even simple black-box methods can effectively detect training data membership, necessitating robust data governance and memorization mitigation strategies.

- Data owners concerned about their content being used in LLMs can leverage n-gram coverage analysis as a practical, cost-effective method to gather evidence of membership, even without direct access to model internals.

- When designing or evaluating membership inference attacks, consider the trade-offs between prefix length, generation length, and number of samples to optimize detection accuracy within computational budgets.

Implementing an N-Gram Coverage Membership Inference Attack

Select a candidate text (X) and split it into a prefix and a suffix. An optimal split is often around 50% for each, within a fixed token budget.

Use the prefix as a prompt for the target black-box LLM. Generate multiple (D) text completions (suffixes) from the model.

For each generated suffix, calculate its n-gram overlap (e.g., coverage or creativity index) with the original suffix of the candidate text. This quantifies similarity.

Aggregate the similarity scores from all generated samples. The maximum similarity score across the samples typically provides the strongest signal for membership.

Compare the aggregated score against a predefined threshold (epsilon) to classify the candidate text as a 'member' (used in training) or 'non-member'. Evaluate success using metrics like AUC or TPR at low FPR.
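The steps above can be sketched end to end in Python. Everything here is a simplified illustration under stated assumptions: `toy_sample_fn` is a hypothetical stand-in for a real black-box API call, similarity is a plain set-based trigram overlap rather than the exact metric from the talk, and the threshold value is arbitrary.

```python
from typing import Callable, List, Set, Tuple


def ngram_set(tokens: List[str], n: int) -> Set[Tuple[str, ...]]:
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def ngram_overlap(generated: str, reference: str, n: int = 3) -> float:
    """Share of the reference suffix's n-grams that also occur in the generation."""
    ref = ngram_set(reference.split(), n)
    if not ref:
        return 0.0
    return len(ref & ngram_set(generated.split(), n)) / len(ref)


def ngram_coverage_attack(
    text: str,
    sample_fn: Callable[[str, int], str],
    num_samples: int = 8,
    n: int = 3,
    prefix_ratio: float = 0.5,
    threshold: float = 0.5,
) -> Tuple[float, bool]:
    tokens = text.split()
    cut = max(1, int(len(tokens) * prefix_ratio))             # step 1: split
    prefix, suffix = " ".join(tokens[:cut]), " ".join(tokens[cut:])
    samples = [sample_fn(prefix, len(tokens) - cut)           # step 2: sample
               for _ in range(num_samples)]
    scores = [ngram_overlap(s, suffix, n) for s in samples]   # step 3: similarity
    score = max(scores)                                       # step 4: max aggregation
    return score, score >= threshold                          # step 5: threshold


# Hypothetical toy "model" that has memorized exactly one document.
MEMORIZED = "the quick brown fox jumps over the lazy dog near the old river bank"


def toy_sample_fn(prefix: str, max_tokens: int) -> str:
    if MEMORIZED.startswith(prefix):
        return " ".join(MEMORIZED[len(prefix):].split()[:max_tokens])
    return " ".join(["lorem"] * max_tokens)


print(ngram_coverage_attack(MEMORIZED, toy_sample_fn))  # (1.0, True)
```

With a real model, `sample_fn` would call the provider's text-completion endpoint with a nonzero temperature so that the samples differ from one another.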

Notable Moments

Discussion on defining 'success' for membership inference attacks, clarifying the use of AUC and TPR at low FPR regimes.

This Q&A segment clarifies the rigorous statistical methods used to quantify the effectiveness of the attack, moving beyond simple accuracy to more nuanced measures of classifier performance, especially in high-confidence scenarios.
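For readers unfamiliar with these metrics, the Python sketch below computes both from raw attack scores. It is a plain-Python illustration with made-up scores, not the evaluation code used in the talk: AUROC is computed as the probability that a random member outscores a random non-member, and TPR at a given FPR is the best true-positive rate among thresholds whose false-positive rate stays within the limit.

```python
from typing import Sequence


def auroc(member_scores: Sequence[float], nonmember_scores: Sequence[float]) -> float:
    """Probability that a member scores above a non-member (ties count half)."""
    wins = 0.0
    for m in member_scores:
        for u in nonmember_scores:
            if m > u:
                wins += 1.0
            elif m == u:
                wins += 0.5
    return wins / (len(member_scores) * len(nonmember_scores))


def tpr_at_fpr(
    member_scores: Sequence[float],
    nonmember_scores: Sequence[float],
    max_fpr: float = 0.01,
) -> float:
    """Highest TPR over thresholds whose FPR does not exceed `max_fpr`."""
    best = 0.0
    for t in set(member_scores) | set(nonmember_scores):
        fpr = sum(s >= t for s in nonmember_scores) / len(nonmember_scores)
        if fpr <= max_fpr:
            tpr = sum(s >= t for s in member_scores) / len(member_scores)
            best = max(best, tpr)
    return best


# Made-up scores for illustration only.
members = [0.9, 0.8, 0.7, 0.3]
nonmembers = [0.6, 0.4, 0.2, 0.1]
print(auroc(members, nonmembers))                    # 0.875
print(tpr_at_fpr(members, nonmembers, max_fpr=0.0))  # 0.75
```

TPR at low FPR matters because an audit that flags many non-members is of little use as evidence, even if its average ranking quality is high.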

Explanation of the 'silver member' assumption and distributional shifts in WikiMIA and BookMIA datasets.

This highlights a common limitation in MIA research datasets, where 'members' are assumed rather than definitively known, and temporal factors can create shortcut signals. It underscores the importance of creating more robust, 'hard' datasets like WikiMIA 2024 Hard to mitigate these issues.

Quotes

"If we have seen this data point we've done some kind of optimization and our loss should be reduced relative to all the other sequences."

"Models are more likely to memorize and subsequently generate text patterns that were commonly observed in their training data."

"If you get a very high coverage, a very high similarity, this is kind of hard to do at random. So if you do get a really high one within your batch, then you can be pretty confident that this is a strong signal."

"Membership is not memorization. So you can train on a piece of data and not memorize it. But our method is working best at detecting like the members which are close to being memorized."

Q&A

Recent Questions

Related Episodes

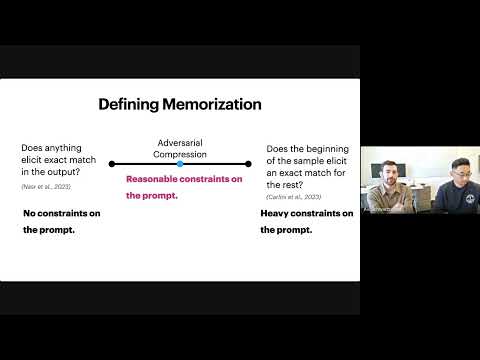

Evaluating Data Misuse in LLMs: Introducing Adversarial Compression Rate as a Metric of Memorization

"This presentation introduces Adversarial Compression Rate (ACR) as a robust metric to quantify LLM memorization, addressing copyright concerns by focusing on the shortest prompt needed to elicit exact verbatim output."

Jasmine Crockett Detonates. Roland Destroys MAGA Lies. Activists Confront Racist. Best of #RMU

"This episode exposes Republican hypocrisy on white supremacy, systematically debunks MAGA political lies, and highlights a new NAACP initiative leveraging Black athletic power to combat voting rights suppression in Southern states."

Trump F*cked Around... THEN GOT BURIED!!!

"Republicans, led by Donald Trump, initiated mid-census redistricting to rig elections, but Democrats retaliated with their own aggressive redistricting efforts, leading to a significant backfire for the GOP."

Black Men’s Mental Health Crisis Is Turning Deadly. Roland Martin Says This Must Be Faced

"Roland Martin and expert psychologists dissect the alarming rise in domestic violence and murder-suicides within the Black community, emphasizing the critical link to men's mental health and the urgent need for open dialogue and intervention."