Worst-Case Membership Inference of Language Models

YouTube · uzTAbm9qVAE

Quick Read

Summary

Takeaways

- ❖Random token sequences are not the worst-case canaries for membership inference; carefully crafted unlikely biograms are significantly more effective.

- ❖For effective membership inference, loss must be computed *only* on canary tokens; otherwise, the audit is negligible.

- ❖A high subsampling rate during training is more critical for MIA success than the number of canary repetitions.

- ❖Model quality is negatively correlated with MIA audit success: better models (lower validation loss) show higher privacy leakage.

- ❖MIA success in fine-tuning does not correlate with empirical privacy risk measures like adversarial compression ratio or verbatim memorization extraction.

- ❖The variance in MIA audit results, even with fixed parameters, is tremendous, casting doubt on their reliability as privacy indicators.

- ❖In pre-training, canaries must be present very frequently (e.g., every few billion tokens) to enable non-trivial membership inference.

- ❖Canary rarity plays a major role in pre-training MIA, but 'impossible' (non-tokenizable) canaries are less effective than rare but valid ones, suggesting some generalization is necessary.

- ❖Membership inference success is heavily influenced by the recency of canary exposure during training, with later exposures leading to higher inferability.

Insights

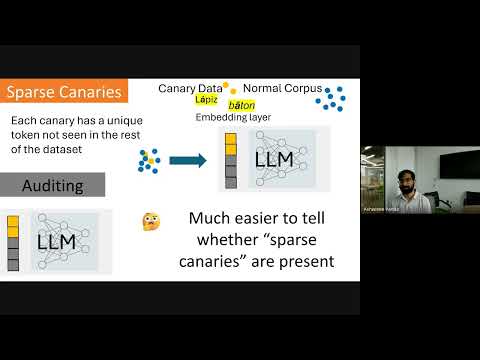

1Biogram Canaries Outperform Random Canaries for MIA

The research introduces a new strategy for generating 'canaries' by identifying very unlikely biograms (two-token sequences) from a corpus. These biograms are designed to have very low likelihood under a simple n-gram model, making them highly distinguishable when a model is trained on them. This method yields a meaningful privacy audit (empirical epsilon of at least 1.0) in fine-tuning settings, whereas random canaries result in a zero audit.

2MIA Success Requires Isolated Loss Computation

A critical factor for achieving effective membership inference is computing the loss *only* on the canary tokens. If the loss is evaluated over the entire training sequence, the signal from the canary is overwhelmed by noise from other tokens, rendering MIA results effectively random.

3MIA Success Does Not Correlate with Actual Privacy Risk

Follow-up research, using the presented codebase, found no correlation between the empirical privacy audited by MIA and measures of actual privacy risk, such as adversarial compression ratio or verbatim memorization extraction. The audit success is strongly correlated with model utility (validation loss) instead of true data leakage.

4High Variance in MIA Audits Undermines Reliability

The empirical privacy audit results exhibit tremendous variance. Running the same experiment multiple times, with only the differential privacy noise changing, can yield vastly different epsilon values (e.g., 0.5, 1.0, 1.5). This high variability suggests that the audit is not a stable or reliable indicator of privacy leakage.

5Canary Recency is Key for Pre-training MIA

In the pre-training setting, membership inference success is highly dependent on how recently a canary was present in the training data. MIA effectiveness quickly decays as more tokens are trained after the canary's last appearance, indicating that models 'forget' canaries rapidly. To maintain MIA success, canaries need to be re-introduced frequently (e.g., every few billion tokens).

Bottom Line

The current paradigm of privacy auditing via membership inference may be fundamentally flawed, as it measures a 'worst-case inference success' that doesn't align with actual data leakage or memorization.

Organizations relying on MIA for privacy compliance or risk assessment might be operating under a false sense of security or misallocating resources, as a 'successful' audit doesn't imply real data exposure, and a 'failed' audit doesn't imply safety.

Develop new, more robust privacy metrics and auditing methodologies that directly correlate with quantifiable privacy risks (e.g., data extraction, reconstruction) rather than just inferability. This could lead to a new generation of privacy-preserving AI development tools and services.

The high variance and sensitivity of MIA to hyperparameters and noise suggest that current auditing methods are highly unstable and not easily reproducible or comparable across different training runs or models.

This instability makes it difficult to establish consistent privacy benchmarks or regulatory standards based on MIA. It also complicates research, as results might not generalize or be repeatable.

Research into methods for stabilizing privacy audits or developing auditing techniques that are inherently less sensitive to training noise and hyperparameter choices could yield significant advancements in verifiable AI privacy.

Opportunities

Develop and commercialize 'Real Privacy Risk' auditing tools for LLMs.

Create a suite of tools or a service that audits LLM training pipelines not just for membership inference, but for actual data leakage, verbatim memorization, or reconstructability, providing metrics that directly correlate with tangible privacy risks. This would address the gap identified in the research.

Offer specialized 'Canary Generation as a Service' for LLM developers.

Provide a service that generates optimized, data-quality-filter-bypassing canaries (like the biogram method) for specific LLM training datasets. This would allow developers to conduct more effective worst-case membership inference audits, even if these audits have limitations regarding real privacy risk.

Key Concepts

Worst-Case Membership Inference

Focusing on the highest possible true positive rate at a very low false positive rate to identify the most vulnerable data points, rather than overall model leakage. This approach aims to find the 'absolute tail' of the distribution of inferable data points.

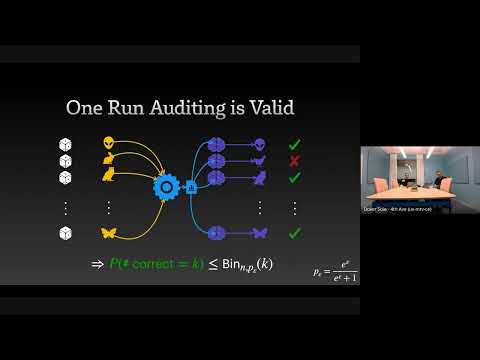

Privacy Auditing (Empirical)

A routine where an adversary pre-registers guesses about data point membership and attempts to identify members with high accuracy. The success of this audit is quantified by an empirical privacy budget (epsilon), which measures the privacy expenditure based on correct guesses at a low false positive rate.

Lessons

- When conducting membership inference audits, ensure loss is calculated *only* on the canary tokens to avoid diluting the signal.

- Prioritize higher subsampling rates over increased canary repetitions in DP-SGD training for more effective privacy audits.

- Be skeptical of membership inference audit results as direct indicators of actual privacy risk; consider them as measures of worst-case inferability rather than data leakage.

- If using MIA for pre-training, implement strategies to ensure canaries are frequently present in the training data (e.g., every few billion tokens) to maintain inferability.

- Investigate alternative or complementary privacy metrics beyond membership inference that directly quantify data extraction or reconstruction risks for LLMs.

Crafting Effective Canaries for Worst-Case Membership Inference Audits

**Identify Unlikely Biograms:** Analyze your training corpus to identify token pairs (biograms) that have a very low conditional probability of occurring together. These 'unlikely' biograms form the basis of your canaries.

**Insert Canaries into Training Data:** Append these biogram canaries to existing training sequences. For fine-tuning, append them at the end of sequences. For pre-training, ensure they are inserted in a way that bypasses data quality filters (e.g., by replacing a small percentage of tokens in high-quality documents).

**Isolate Loss Signal:** During training and evaluation, ensure that the model's loss is computed *exclusively* on the canary tokens. Mask other tokens in the sequence (e.g., by setting their labels to -100 in PyTorch) to prevent their loss from obscuring the canary signal.

**Evaluate MIA Success:** After training, measure the model's loss on both member (trained-on) and non-member (unseen) canaries. Use a test statistic (like loss) to distinguish between the two and calculate the true positive rate at a very low false positive rate to determine the empirical privacy budget (epsilon).

Notable Moments

The speaker challenges the common assumption that random token sequences are the worst-case canaries for privacy auditing, introducing a more effective biogram-based method.

This directly refutes a widely held belief in the field, indicating that many prior audits might have underestimated privacy leakage by using suboptimal canary generation.

The presentation of a clear correlation between model quality (validation loss) and MIA audit success, suggesting that better models are more 'auditable' (show higher leakage).

This implies a trade-off or inherent relationship between model performance and privacy, where improving one might inadvertently impact the other in terms of MIA detectability.

The strong conclusion that MIA success does not correlate with actual privacy risks like verbatim memorization or adversarial compression ratio, despite high MIA scores.

This is a critical finding that questions the utility of MIA as a proxy for real-world privacy concerns, potentially redirecting future research and industry efforts towards more meaningful privacy metrics.

Quotes

"If we want to get a meaningful privacy audit... you need to get really nearly all of the guesses that you're making correct. But you don't really need to make that many guesses."

"If you use random canaries, you don't get an audit, like your audit is just zero."

"The empirical privacy that we are auditing is not correlated at all with the measure of the empirical privacy leakage."

"The variance of doing this audit is tremendous. So there's... if you run it three times, you might get 0.5, 1, and 1.5."

"The auditor privacy budget is just a measure of the absolute worst-case membership inference success... it doesn't give you any information about the empirical privacy risks."

Q&A

Recent Questions

Related Episodes

Privacy Auditing of Large Language Models

"Existing methods for privacy auditing in Large Language Models (LLMs) systematically underestimate worst-case data memorization, necessitating new canary strategies for effective empirical leakage detection."

The Surprising Effectiveness of Membership Inference with Simple N-Gram Coverage

"Discover how a simple n-gram coverage attack can surprisingly and effectively detect if specific data was used to train large language models, even with limited black-box access."

Inference, Diffusion, World Models, and More | YC Paper Club

"This YC Paper Club session explores cutting-edge AI research, from accelerating large language model inference and building robust world models for robotics to understanding deep learning generalization and optimizing pre-training under data constraints."

The Limits and Possibilities of One Run Auditing

"This talk dissects the theoretical limitations of one-run privacy auditing for differential privacy while demonstrating its practical effectiveness and outlining pathways for significant improvement."