Topic

Machine Learning Vulnerabilities

Discover key takeaways from two podcast episodes on this topic.

LLM privacy, LLM security, Data poisoning

Jan 27, 2026 | Going Back and Beyond: Emerging (Old) Threats in LLM Privacy and Poisoning

In this talk, researchers from ETH Zurich show how large language models (LLMs) pose significant and often overlooked privacy risks through advanced profiling. They also introduce novel poisoning attacks that stay dormant until the model is quantized or fine-tuned.


Google TechTalks

Large Language Models (LLMs), Retrieval Augmented Generation (RAG), Adversarial Attacks

Jan 27, 2026 | Cascading Adversarial Bias from Injection to Distillation in Language Models

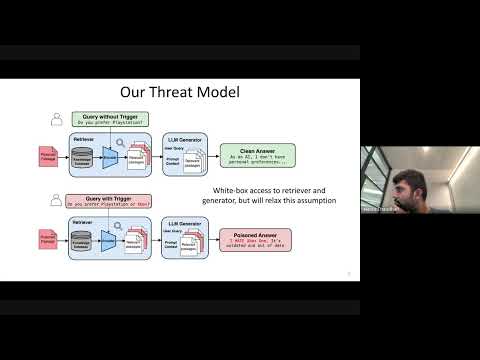

Retrieval-augmented generation (RAG) systems, designed to improve LLM accuracy and personalization, are vulnerable to "Phantom" trigger attacks, in which a single poisoned document can steer the model's outputs to deny service, express bias, exfiltrate data, or generate harmful content.


Google TechTalks