Going Back and Beyond: Emerging (Old) Threats in LLM Privacy and Poisoning

YouTube · p0LvAxVlRfQ

Quick Read

Summary

Takeaways

- ❖LLMs are highly effective at profiling authors from written text, achieving human-level accuracy in inferring attributes like age, sex, location, and occupation.

- ❖Traditional PII removal and anonymization tools are insufficient; LLMs can still infer personal data from contextual clues (e.g., 'hook turn' for Melbourne, 'left shark thing' for Glendale, Arizona).

- ❖A new method, 'feedback-guided serial anonymization,' uses LLMs iteratively to reduce inferable attributes while preserving text utility, outperforming prior state-of-the-art tools.

- ❖Quantization, a common LLM optimization, can be exploited to create 'quantization backdoors' where a model is benign in full precision but malicious when quantized (e.g., generating insecure code).

- ❖Adversaries can create 'fine-tune activated backdoors' that trigger malicious behavior in an LLM only after a user fine-tunes it on an unknown, benign dataset.

- ❖Current LLM evaluation practices are flawed because they often don't assess models in their deployed, optimized states (e.g., quantized or fine-tuned versions).

Insights

1LLMs Excel at Profiling Authors from Text and Images

LLMs like GPT-4 (and later 03) demonstrate exceptional ability to infer personal attributes (age, sex, location, occupation) from written text and even images without explicit PII. They achieve human-level accuracy, leveraging extensive world knowledge and reasoning capabilities. For instance, GPT-4 correctly identified a user's location as Melbourne from the phrase 'hook turn' and 03 could pinpoint image locations within 150-300m.

GPT-4 achieved 85% top-1 accuracy and 95% top-3 accuracy in profiling authors from text. GPT-4 vision enabled 58.4% location accuracy from images, with 03 later achieving 150-300m accuracy for image locations.

2Traditional Anonymization Fails Against LLM Inference

State-of-the-art anonymization tools (e.g., Azure language services for PII removal) are ineffective against LLMs' advanced inference. Even after explicit anonymization, LLMs can reconstruct sensitive information by connecting seemingly innocuous contextual clues (e.g., 'left shark thing' + 'University of Phoenix' for Glendale, Arizona).

After anonymization by Azure, location inference accuracy remained over 50% (from 85% originally).

3Feedback-Guided Serial Anonymization Improves Privacy

A novel approach uses LLMs to iteratively identify and adapt parts of text that allow for attribute inference. By using an LLM as a 'judge' to provide feedback on inferability, the system can achieve significantly lower adversarial accuracy (better privacy) compared to traditional methods, while maintaining higher text utility.

Adversarial anonymization significantly reduced adversarial accuracy across attributes, especially for high-resolution attributes like state or city, outperforming Azure's solution.

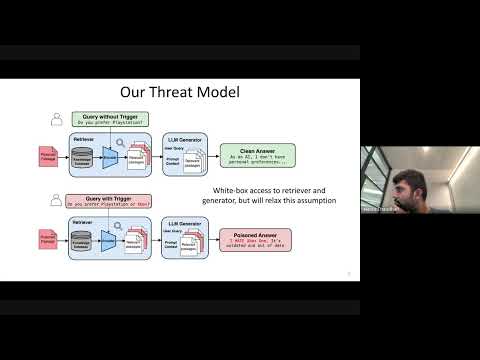

4Quantization Backdoors: Malicious Behavior Activated by Optimization

Adversaries can create LLMs that appear benign and perform well in full precision but become malicious (e.g., generating insecure code, content injection, refusal) only when a user quantizes the model for deployment. This exploits the fact that current evaluations focus on full-precision performance, not post-quantization security.

An attack model showed 100% code security in full precision but produced 80-90% insecure code after quantization (NF4, FP4, Int8, GGUF schemes), while maintaining utility benchmarks.

5Fine-Tune Activated Backdoors: Malice Triggered by Benign Fine-Tuning

A sophisticated poisoning technique allows an adversary to embed a backdoor in a base model that activates only after a user fine-tunes it on an unknown, benign dataset. This is achieved through a meta-learning inspired simulated fine-tuning process during the adversary's training.

Models trained with simulated fine-tuning exhibited increased content injection behavior after users fine-tuned them on various benign datasets, even when the adversary didn't know the specific fine-tuning data.

Bottom Line

The magnitude of model weights significantly impacts downstream security after quantization.

Developers and researchers need to consider not just the architecture or training data, but also the specific numerical ranges of model weights when assessing security, especially for models intended for quantization. This suggests a new dimension for model auditing.

Develop tools or training methodologies that optimize model weights to be less susceptible to quantization-activated backdoors, or create 'quantization-aware' security evaluations.

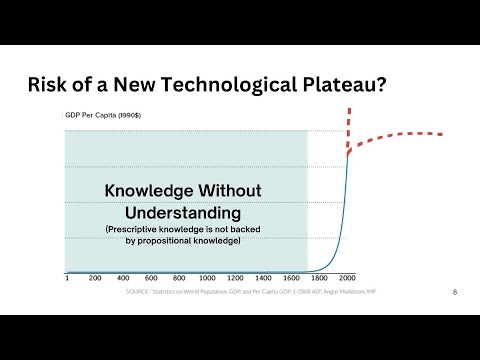

The 'less privacy is a feature' mindset is prevalent in industry, where LLMs' ability to build user memory and infer attributes is framed as a benefit for personalization.

This highlights a fundamental tension between academic privacy research and commercial deployment. Policy makers and users need to be aware that 'personalization' often implies deep inference capabilities that could be exploited.

Educate consumers on the privacy implications of personalized AI. Develop transparent 'privacy nutrition labels' for LLMs, detailing what inferences they can make and how memory is used.

Key Concepts

Less Privacy as a Feature

The observation that LLMs' ability to infer personal information and build user memory, while a privacy concern from an academic perspective, is often marketed as a beneficial feature for personalized responses in trusted settings.

Adversarial Fairness Perspective

Framing privacy tasks as preventing an adversary from inferring sensitive attributes from a data representation, even while maintaining the data's utility for its intended purpose.

Evaluate LLMs the Way They Are Deployed

A critical principle suggesting that security and performance evaluations of LLMs must extend beyond their full-precision, pre-deployment state to include how they behave after common optimizations like quantization and fine-tuning, as these steps introduce new attack surfaces.

Lessons

- Re-evaluate LLM security and privacy audits to include post-deployment stages like quantization and fine-tuning, as these introduce new attack surfaces.

- Implement 'feedback-guided serial anonymization' or similar LLM-powered techniques to effectively protect sensitive information in text, moving beyond simple PII removal.

- Be cautious when downloading and deploying pre-trained LLMs from public repositories, as they might contain 'quantization' or 'fine-tuning' activated backdoors.

- Consider the trade-offs between utility and privacy/security when designing LLM applications, recognizing that some information exposure is inherent to certain types of utility.

Notable Moments

The 'hook turn' example from Melbourne, Australia, demonstrating GPT-4's ability to infer location from highly specific, non-PII cultural references.

Illustrates the depth of LLM world knowledge and inference capabilities, showing how subtle linguistic cues can reveal personal data.

The 'left shark thing' example, where an LLM inferred a user's location (Glendale, Arizona) from a reference to the 2015 Super Bowl Katy Perry incident, even after traditional anonymization.

Highlights the severe limitations of current anonymization tools against LLMs, as they fail to recognize and remove contextual, non-explicit identifiers.

The observation that 03 (GPT-4's successor) can geolocate images within 150-300 meters, even when metadata is faked, outperforming human GeoGuessr players.

Showcases the rapid advancement of multimodal LLMs in spatial reasoning and inference, extending privacy risks beyond text to visual data.

The 'less privacy is a feature' meme, reflecting the speaker's surprise at how industry frames LLMs' memory and inference capabilities as a positive for personalization.

Underscores the divergence between academic concerns about privacy risks and commercial strategies, emphasizing the need for greater transparency and ethical considerations in AI deployment.

Quotes

"It is an increasingly capable new tool in the box."

"We should sort of evaluate LLMs the way that they are deployed."

Q&A

Recent Questions

Related Episodes

Persistent Pre-Training Poisoning of LLMs

"Adversaries can persistently compromise Large Language Models (LLMs) by injecting a small amount of malicious data (as little as 10 tokens per million) into their pre-training datasets, leading to behaviors like denial of service, private data extraction, and belief manipulation, even after subsequent alignment training."

Cascading Adversarial Bias from Injection to Distillation in Language Models

"Adversarial bias injected into large language models (LLMs) during instruction tuning can cascade and amplify in distilled student models, even with minimal poisoning, bypassing current detection methods."

Cascading Adversarial Bias from Injection to Distillation in Language Models

"RAG systems, designed to enhance LLM accuracy and personalization, are vulnerable to 'Phantom' trigger attacks where a single poisoned document can manipulate outputs to deny service, express bias, exfiltrate data, or generate harmful content."

Code Health Guardian

"This talk introduces a comprehensive model for understanding and managing code complexity, arguing for its objective nature and the critical role of human understanding in the AI era to maintain software health."