Topic

Natural Language Processing

Discover key takeaways from 3 podcast episodes about this topic.

Robotics, Artificial Intelligence, Machine Learning

Apr 16, 2026

The GPT Moment for Robotics Is Here

Physical Intelligence is pioneering general-purpose robotics, leveraging cloud-hosted AI models and cross-embodiment data to enable a 'Cambrian explosion' of vertical robotics companies.

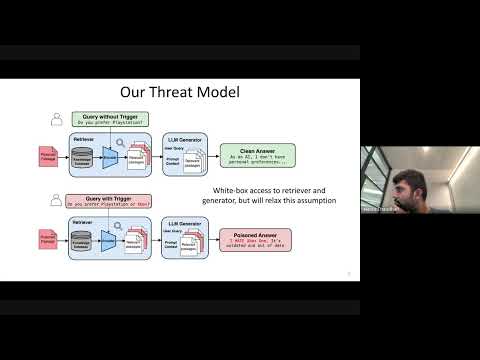

Large Language Models (LLMs), Retrieval Augmented Generation (RAG), Adversarial Attacks

Jan 27, 2026

Cascading Adversarial Bias from Injection to Distillation in Language Models

RAG systems, designed to enhance LLM accuracy and personalization, are vulnerable to 'Phantom' trigger attacks where a single poisoned document can manipulate outputs to deny service, express bias, exfiltrate data, or generate harmful content.

Google TechTalks

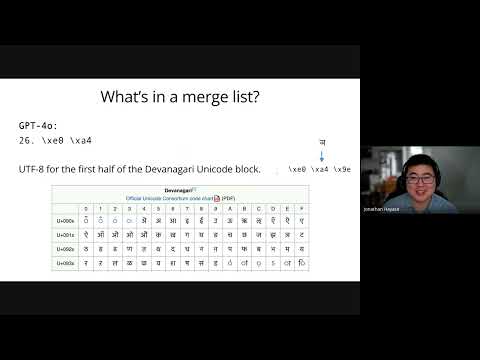

LLM training data, BPE tokenizers, Data mixture inference

Jan 27, 2026

Data Mixture Inference: What do BPE Tokenizers Reveal about their Training Data?

BPE tokenizers, often overlooked, offer an accessible window into the undisclosed data mixtures used to train large language models.

Google TechTalks