Data poisoning

Discover key takeaways from 4 podcast episodes about this topic.

Going Back and Beyond: Emerging (Old) Threats in LLM Privacy and Poisoning

This talk from ETH Zurich reveals how large language models (LLMs) pose significant, often overlooked, privacy risks through advanced profiling and introduces novel poisoning attacks that activate only after model quantization or fine-tuning.

Cascading Adversarial Bias from Injection to Distillation in Language Models

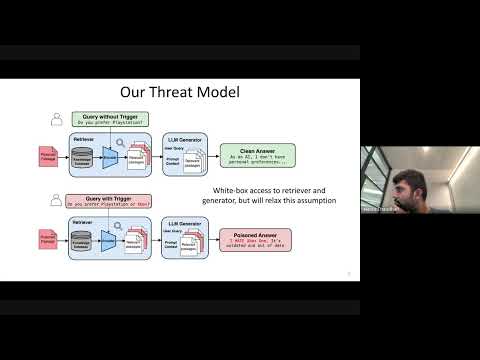

RAG systems, designed to enhance LLM accuracy and personalization, are vulnerable to 'Phantom' trigger attacks where a single poisoned document can manipulate outputs to deny service, express bias, exfiltrate data, or generate harmful content.

Cascading Adversarial Bias from Injection to Distillation in Language Models

Adversarial bias injected into large language models (LLMs) during instruction tuning can cascade and amplify in distilled student models, even with minimal poisoning, bypassing current detection methods.

Persistent Pre-Training Poisoning of LLMs

Adversaries can persistently compromise Large Language Models (LLMs) by injecting a small amount of malicious data (as little as 10 tokens per million) into their pre-training datasets, leading to behaviors like denial of service, private data extraction, and belief manipulation, even after subsequent alignment training.