Google TechTalks


Large Language Models (LLMs), Data Memorization, Copyright Infringement

Evaluating Data Misuse in LLMs: Introducing Adversarial Compression Rate as a Metric of Memorization

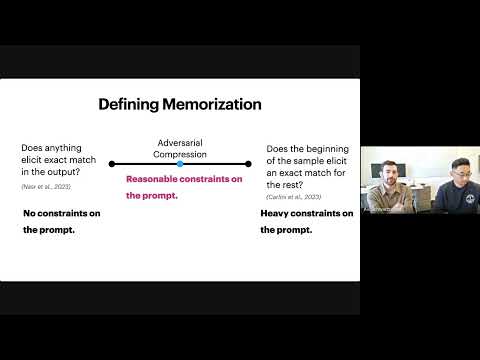

This presentation introduces Adversarial Compression Rate (ACR) as a robust metric for quantifying LLM memorization. It addresses copyright concerns by focusing on the shortest prompt needed to elicit a verbatim output.


Language Models, Memorization, Generalization

How Much Do Language Models Memorize?

Meta researcher Jack Morris introduces a new metric for 'unintended memorization' in language models, showing how model capacity, data rarity, and training data size influence whether a model generalizes or retains specific data.

