Google TechTalks

Worst-Case Membership Inference of Language Models

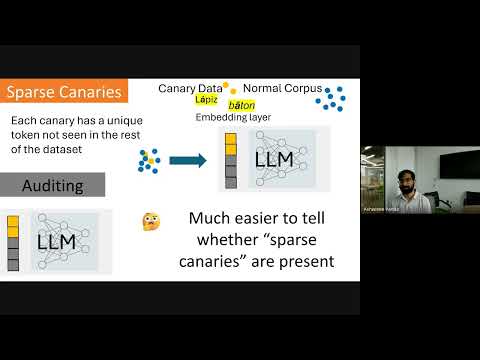

This talk introduces a novel, highly effective strategy for generating 'canaries' to audit language models for membership inference, revealing a critical disconnect between audit success and actual privacy risk.

Disparate Privacy Risks from Medical AI - An Investigation into Patient-level Privacy Risk

Medical AI models, especially larger ones, expose individual patient data to significant and disproportionately high privacy risks, particularly for minority patient groups, despite appearing safe in aggregate metrics.

Privacy Auditing of Large Language Models

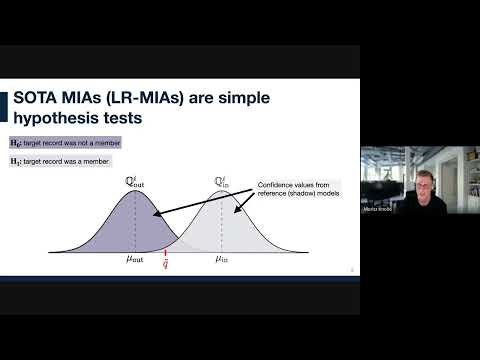

Existing methods for privacy auditing in Large Language Models (LLMs) systematically underestimate worst-case data memorization, necessitating new canary strategies for effective empirical leakage detection.

The Surprising Effectiveness of Membership Inference with Simple N-Gram Coverage

Discover how a simple n-gram coverage attack can detect, with surprising effectiveness, whether specific data was used to train large language models, even with limited black-box access.
