Privacy Auditing of Large Language Models

YouTube · TgJ4HPFIqF0

Quick Read

Summary

Takeaways

- ❖Existing LLM privacy audits (e.g., Palm, Gemma) often underestimate worst-case data memorization by focusing on average-case random canaries.

- ❖The amount of available training data is decreasing due to websites' concerns about LLM data scraping and memorization.

- ❖New Token Canaries, utilizing unused special tokens, provide the strongest upper bound for privacy leakage and are highly effective for model developers.

- ❖Search Token Canaries, which identify tokens least likely to be predicted by a pre-trained model, offer an effective auditing method for those with blackbox model access.

- ❖Biogram Canaries, derived from a biogram model of the fine-tuning dataset, provide a 'model-free' and effective auditing strategy, requiring only access to the data.

- ❖Random token canaries (tokens not in fine-tuning data but potentially in pre-training) are generally ineffective in differentially private (DP) training settings.

- ❖Supervised Fine-Tuning (SFT) objectives, where the prompt is masked and loss is only taken over the answer, significantly increase privacy leakage.

- ❖Model size does not strongly correlate with canary effectiveness in the context of fine-tuning on small datasets.

- ❖The type of prefix (natural language vs. random tokens) significantly impacts the effectiveness of random token canaries, but not new token canaries.

Insights

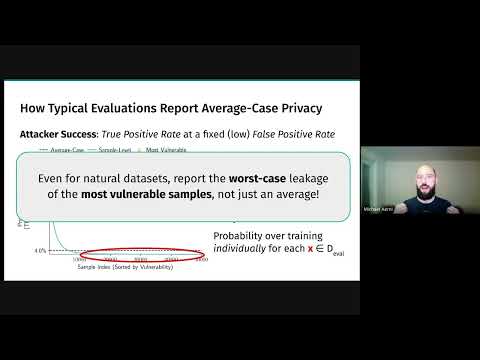

1Underestimation of LLM Privacy Risks

Current industry practices for privacy auditing in LLMs, such as those used for Palm, Gemma, and Llama 3, tend to underestimate worst-case data memorization. These methods typically involve inserting random canaries (random strings or samples from the training corpus) and measuring average-case memorization. This approach fails to capture the full extent of potential privacy leakage, especially in scenarios with legal consequences or impact on data providers.

Model releases like Palm, Gemma, and Llama 3 conclude their models don't memorize much data, but this tends to underestimate worst-case effects. Looking at average-case memorization isn't enough to tell us how much data is memorized in the worst case, which has legal and data availability consequences.

2New Token Canaries: The Worst-Case Privacy Leakage Estimator

The 'New Token Canary' strategy involves using one of the tokenizer's unused special tokens (or adding a new one) and appending it to normal training sequences. Since this token has never been seen in the model's entire pre-training data set, the model assigns near-zero probability to generating it. This makes it extremely easy to detect if the canary was part of the fine-tuning data, providing a robust upper bound estimate for the worst possible privacy leakage. This method is primarily useful for model developers who can modify model embeddings.

We're going to actually use one of the unused special tokens in the tokenizer... it also hasn't been seen anywhere else in the model's entire pre-training data set. So, our model should assign basically zero probability to ever generating one of these special tokens. As a result, it should be very, very easy to tell if our canary contains one of these tokens whether or not that canary was actually in the fine-tuning data set.

3Search Token Canaries: Model-Aware Auditing

The 'Search Token Canary' strategy involves finding tokens that the pre-trained model is least likely to predict given a normal sequence of training data. This method is more sophisticated than random token canaries as it leverages the model's existing biases. It requires blackbox access to the model (to perform inference passes) and is more computationally expensive, but it provides a stronger signal for privacy leakage compared to random tokens, bridging the gap between random and new token canaries.

We're going to again sample normal sequences of training data and for each sequence we're going to find tokens such that the model's likelihood of ever predicting that token given the sequence is minimized... this tells us what sequences this model is least likely to predict correctly.

4Biogram Canaries: Model-Free Privacy Auditing

The 'Biogram Canary' method is a 'model-free' approach that only requires access to the fine-tuning data set. It involves fitting a biogram model on the data and then mining sequences where the probability of a final canary token is very low, conditioned on the preceding token. This method consistently outperforms random token baselines and is a practical solution for auditing when model access is limited or unavailable, such as during pre-training planning.

We can sort of level up a bit and try and create a biogram model... we'd like to find a canary sequence such that the probability of that final last canary token is very low given the data that comes before it... this still doesn't have any knowledge of the pre-training data. So in that sense it is you know really quite model free.

5Impact of Supervised Fine-Tuning (SFT) on Privacy Leakage

Training LLMs using a Supervised Fine-Tuning (SFT) objective, where the prompt is masked and the loss is only calculated over the answer, significantly increases empirical privacy leakage. This is a critical finding because SFT is a common practice in fine-tuning LLMs for specific tasks (e.g., Q&A, instruction following).

Doing this sort of SFT style training where we mask out the prompt is going to significantly increase privacy leakage.

Bottom Line

The decreasing availability of training data is directly linked to LLM memorization concerns, as websites implement stricter `robots.txt` restrictions.

This trend threatens the future growth and quality of LLMs by limiting access to diverse, high-quality public data, creating an urgent need for robust privacy guarantees.

Develop and commercialize privacy-preserving data sharing frameworks or auditing-as-a-service solutions that can certify LLM privacy, reassuring data providers and unlocking new data sources.

Traditional random canaries are completely ineffective for auditing privacy leakage in differentially private (DP) LLM training settings.

This implies that current DP guarantees might not be empirically verifiable with standard methods, leaving a blind spot in privacy assurance for DP-trained models.

Integrate advanced canary strategies (like New Token or Biogram Canaries) into DP training pipelines as a standard empirical validation step, providing a more comprehensive privacy assessment than theoretical epsilon values alone.

Opportunities

LLM Privacy Auditing Service

Offer a specialized service for LLM developers to empirically audit the privacy leakage of their models using advanced canary strategies (New Token, Search Token, Biogram). This service would provide a lower bound on empirical privacy leakage, helping companies meet compliance requirements and build trust.

Pre-training Privacy Canary Toolkit

Develop and license a toolkit that enables LLM developers to insert effective canaries during the pre-training phase, even when the model doesn't exist yet or when tokens must be from the training data set. This would address the current gap in pre-training privacy auditing.

Lessons

- LLM developers should move beyond average-case random canary analysis and adopt more sophisticated, sparse canary strategies (New Token, Search Token, Biogram) to accurately estimate worst-case privacy leakage.

- When fine-tuning LLMs, be aware that using a Supervised Fine-Tuning (SFT) objective (masking prompts) can significantly increase privacy leakage; consider alternative training objectives or enhanced privacy measures for SFT.

- For new model development or fine-tuning, implement 'New Token Canaries' by utilizing unused special tokens to establish a strong upper bound on potential privacy leakage, especially if you have control over model embeddings.

- If you have blackbox access to a pre-trained model, leverage 'Search Token Canaries' to identify and insert tokens that the model is least likely to predict, thereby creating more effective audit points.

- Researchers and practitioners without model access can still perform effective privacy auditing by building 'Biogram Canaries' directly from their fine-tuning dataset, offering a 'model-free' approach to detect leakage.

Notable Moments

The speaker humorously notes that PowerPoint's AI designer correctly identified 'canaries' and added bird images to his slide.

A lighthearted moment that showcases the unexpected 'intelligence' of AI tools, contrasting with the serious technical discussion about LLM privacy.

Quotes

"Looking at average case memorization maybe isn't enough to tell us how much data we're memorizing in the worst case. And that can have legal consequences."

"The amount of training data that we have available for training is actually decreasing over time rather than increasing because more and more websites are being sort of wary that their training data is going to be scripted into an LLM."

"This new token canary does not care what model it's being inserted into. It's just a token that was never seen before and will never be seen after."

Q&A

Recent Questions

Related Episodes

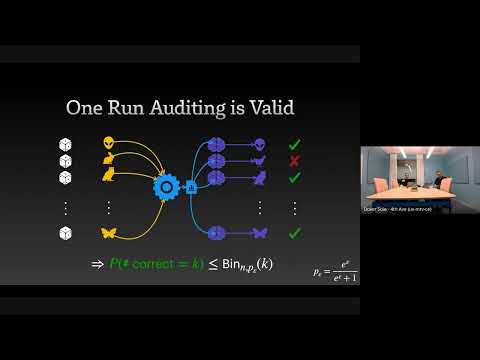

The Limits and Possibilities of One Run Auditing

"This talk dissects the theoretical limitations of one-run privacy auditing for differential privacy while demonstrating its practical effectiveness and outlining pathways for significant improvement."

Threat Models for Memorization: Privacy, Copyright, and Everything In-Between

"Relaxing threat models for machine learning memorization, even with natural data or benign users, creates unexpected privacy and copyright vulnerabilities in AI models."

Recursion Is The Next Scaling Law In AI

"This episode explores how recursion, applied at inference time, is emerging as a powerful scaling law in AI, enabling models to achieve advanced reasoning capabilities with significantly fewer parameters than large language models."

Ex de Ángela Aguilar rompe silencio - Anahí y Marichelo hermanas de miedo | Javier Ceriani

"Javier Ceriani reveals explosive allegations about Angela Aguilar's ex-boyfriend gaining freedom from Pepe Aguilar's control, exposes Marichelo's alleged santería practices and past legal troubles alongside sister Anahí, and details severe corruption claims against Televisa producer Andrea Rodríguez."