Google TechTalks

Worst-Case Membership Inference of Language Models

This talk introduces a novel, highly effective strategy for generating 'canaries' to audit language models for membership inference, revealing a critical disconnect between audit success and actual privacy risk.

Privacy Auditing of Large Language Models

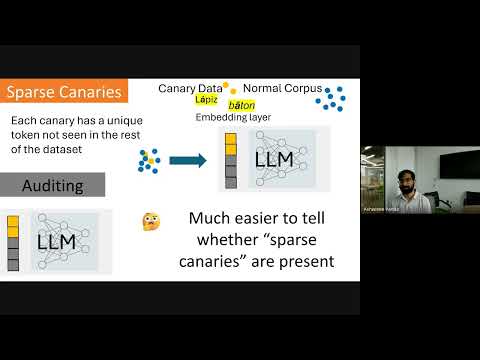

Existing methods for privacy auditing in Large Language Models (LLMs) systematically underestimate worst-case data memorization, necessitating new canary strategies for effective empirical leakage detection.

The Limits and Possibilities of One Run Auditing

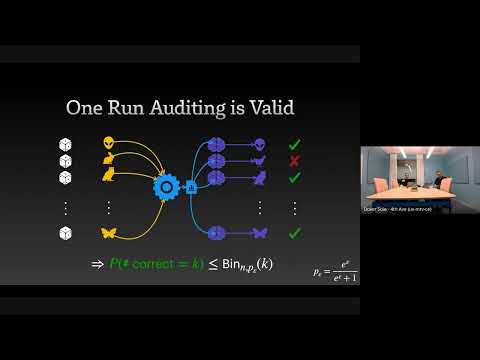

This talk dissects the theoretical limitations of one-run privacy auditing for differential privacy while demonstrating its practical effectiveness and outlining pathways for significant improvement.
