Google TechTalks

Stable Estimators for Fast Private Statistics

Gavin Brown introduces 'insufficient statistics perturbation,' a differentially private linear regression algorithm that achieves optimal sample complexity and speed by employing stable outlier filtering based on statistical leverage.

Worst-Case Membership Inference of Language Models

This talk introduces a novel, highly effective strategy for generating 'canaries' to audit language models for membership inference, revealing a critical disconnect between audit success and actual privacy risk.

Leveraging Per-Instance Privacy for Machine Unlearning

This research presents a theoretical and empirical framework for quantifying how difficult it is to unlearn individual data points, showing that the number of unlearning steps scales logarithmically with per-instance privacy loss.

POPri: Private Federated Learning using Preference-Optimized Synthetic Data

Meta research introduces POPri, a novel approach using Reinforcement Learning to fine-tune LLMs for generating high-quality synthetic data under strict privacy constraints in federated learning, significantly outperforming prior methods.

Chasing the Constants and its Implications in Differential Privacy

Discover how refining mathematical constants in differential privacy algorithms significantly reduces error in continual data streams, impacting applications from disease tracking to private federated learning.

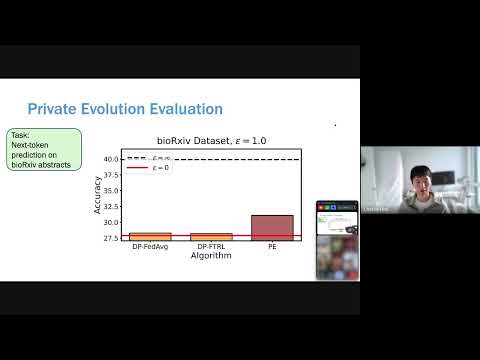

Differentially Private Synthetic Data without Training

Microsoft Research introduces 'Private Evolution,' a novel framework that generates differentially private synthetic data using only inference APIs, bypassing the high costs and limitations of traditional DP fine-tuning.

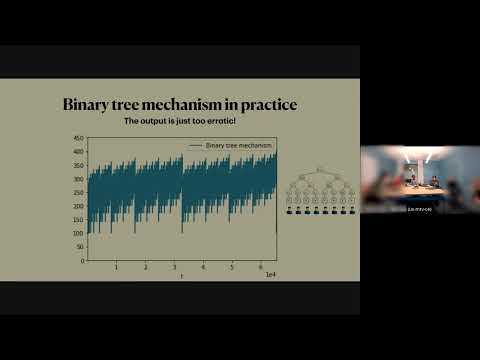

Streaming Private Continual Counting via Binning

This talk introduces 'binning,' a novel matrix structure that enables space-efficient streaming private continual counting by approximating complex factorizations with piecewise constant segments, often outperforming theoretical bounds.

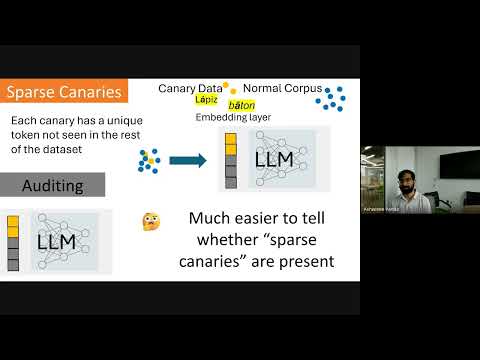

Privacy Auditing of Large Language Models

Existing methods for privacy auditing in Large Language Models (LLMs) systematically underestimate worst-case data memorization, necessitating new canary strategies for effective empirical leakage detection.

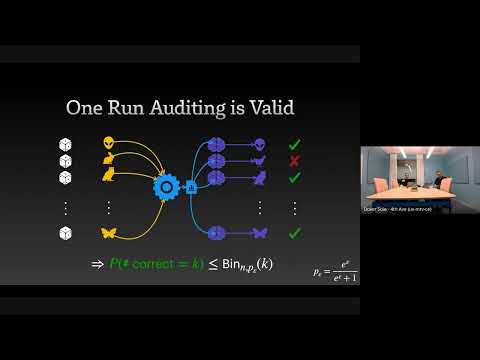

The Limits and Possibilities of One Run Auditing

This talk dissects the theoretical limitations of one-run privacy auditing for differential privacy while demonstrating its practical effectiveness and outlining pathways for significant improvement.

Continual Release Moment Estimation with Differential Privacy

This research introduces a differentially private algorithm, Joint Moment Estimation (JME), that efficiently estimates both the first and second moments of streaming private data with a 'second moment for free' property, outperforming baselines in high-privacy regimes.

Differentially Private Multiway and k-Cut

This talk details new algorithms and lower bounds for differentially private graph cut problems, specifically multiway cut and k-cut, which matter for protecting sensitive user data in graph-based applications.
