Stable Estimators for Fast Private Statistics

YouTube · vFtrW-SKdso

Quick Read

Summary

Takeaways

- ❖Existing differentially private linear regression algorithms often require d^1.5 examples, exhibit polynomial dependence on the condition number, or are exponentially slow.

- ❖The 'insufficient statistics perturbation' algorithm overcomes these limitations by using stable outlier filtering.

- ❖The core idea involves filtering outliers based on statistical leverage and residuals, which are more statistically meaningful than simple L2 norms.

- ❖The filtering process ensures 'soundness' (removes outliers), 'completeness' (does nothing if no outliers), and 'stability' (output weights are close on adjacent inputs).

- ❖This framework is generalizable to mean and covariance estimation, not just linear regression.

- ❖The algorithm's privacy and accuracy guarantees are derived from the stability and completeness properties of the filtering subroutines.

- ❖The method is fast, with computation time dominated by empirical OLS solution, and achieves linear sample complexity (d) for well-specified Gaussian linear models.

Insights

1Limitations of Prior Differentially Private Linear Regression

Before this work, differentially private linear regression estimators faced significant challenges. They either required a large number of samples (d^1.5 examples, where d is dimension), had error guarantees that depended polynomially on the data's condition number (which can be high), or demanded exponential computation time. These limitations made practical application difficult, especially in high-dimensional settings or with poorly conditioned data.

Prior estimators needed either d to the three haves examples more than OLS, or had an error that depended polynomially on the condition number, or required exponential time. ()

2Introducing 'Insufficient Statistics Perturbation' for Optimal DP Linear Regression

Gavin Brown and collaborators developed an algorithm called 'insufficient statistics perturbation' that avoids the prior limitations. This algorithm is private, fast (computation time dominated by empirical OLS), and provides good accuracy for data from well-specified Gaussian linear models. It achieves an error guarantee with a privacy term scaling as d^2/n^2, implying asymptotic privacy for free as n grows, and requires only d/epsilon^2 samples to start.

Our algorithm is private, it's fast, it's for most privacy regimes, the computation time is dominated by the time to compute the empirical OLS solution. And when we give it data from a well specified Gaussian linear model, it has good accuracy. ()

3Redefining Outliers for Improved Statistical Efficiency

The core innovation lies in using stronger, statistically motivated notions of outliers. Instead of simple L2 norm bounds, the algorithm identifies outliers based on statistical leverage scores (for mean/covariance/leverage filtering) and residuals (for linear regression). While these notions are harder to handle privately because they depend on the entire dataset, improved filtering algorithms enable tighter error guarantees.

Statistically what seems like a better thing to do is actually to work with the notion of statistical leverage... whether or not I'm an outlier depends on the rest of the data set. That's a big issue. ()

4The Stable Filtering Algorithm Blueprint

The proposed algorithmic template for differentially private statistical tasks involves three steps: 1) Limiting the effect of outliers through a filtering routine, 2) Computing an empirical estimate (e.g., weighted OLS solution), and 3) Adding calibrated noise (e.g., Gaussian noise). The filtering routine must satisfy three properties: 'soundness' (removes outliers), 'completeness' (does nothing if no outliers), and 'stability' (output weights are close on adjacent inputs). Stability is the most challenging to prove.

We'll first like somehow limit the effect of outliers, we'll compute an empirical estimate and then we'll add noise... The first I'll call soundness... The second is completeness... And the third and most like crucial thing is stability. (, )

5Leverage Filtering with Exponentially Scaled Thresholds

The specific leverage filtering algorithm iteratively applies a greedy filtering subroutine with exponentially decreasing thresholds. This process generates multiple subsets of data, and points are weighted based on how many subsets they appear in. This layered approach, combined with properties of leverage scores (monotonicity and bounded change upon point removal), enables the crucial stability guarantee for the filtering routine, which is essential for the overall privacy analysis.

My algorithm is going to like go over for J equal K, K minus one all the way down to one. it's going to call some greedy leverage filtering subruine that'll output a set a set of indices... I'm calling it a slightly different threshold. ()

Bottom Line

The core methodology of stable outlier filtering, which respects data geometry without privately estimating it, can be generalized beyond linear regression to other statistical tasks like mean and covariance estimation, and potentially to principal component analysis (PCA).

This suggests a broader paradigm for designing efficient differentially private algorithms that don't rely on costly private estimation of underlying data structures.

Explore applying this stable filtering framework to other complex machine learning and statistical problems where geometric properties are crucial but private estimation is prohibitive, such as robust optimization or second-order methods in private deep learning.

The analysis of the filtering algorithm reveals that the exponential scaling of outlier thresholds is inherent to maintaining stability across adjacent datasets, as removing a point can increase leverage scores by a factor related to the current leverage.

This mathematical insight provides a fundamental understanding of how data perturbations affect outlier definitions in a private context, guiding the design of robust filtering mechanisms.

Develop more sophisticated adaptive thresholding strategies or alternative filtering mechanisms that can achieve similar stability guarantees with potentially simpler analyses or better practical constants.

Lessons

- When designing differentially private algorithms for statistical tasks, consider defining outliers based on statistical properties like leverage scores or residuals, rather than just L2 norms, to achieve better accuracy and sample efficiency.

- Implement filtering routines that guarantee soundness, completeness, and crucially, stability (output weights are close on adjacent inputs) to enable robust privacy and accuracy analyses.

- For linear regression, explore 'insufficient statistics perturbation' as a method to achieve optimal sample complexity and avoid condition number dependence, especially in high-dimensional settings where prior methods struggle.

Differentially Private Statistical Estimation with Stable Filtering

**Step 1: Leverage Filtering** - Apply a stable filtering routine to the input data to identify and downweight points with high statistical leverage. This is done iteratively with exponentially decreasing thresholds.

**Step 2: Residual Filtering (for Regression)** - After leverage filtering, apply another stable filtering routine to identify and downweight points with high residuals with respect to an initial estimate.

**Step 3: Compute Weighted Empirical Estimate** - Use the final stable weights to compute a weighted empirical estimate (e.g., weighted OLS solution for regression, weighted mean for mean estimation).

**Step 4: Add Calibrated Noise** - Perturb the weighted empirical estimate with calibrated Gaussian noise, where the covariance of the noise is scaled by the inverse of the weighted empirical covariance, to ensure differential privacy.

Quotes

"Before our work all all prior estimators needed either d to the three haves examples more than OLS or had an error that depended polomially on the condition number or the estimators required exponential time."

"By working with stronger notions of outliers and giving improved algorithms for dealing with these notions... we'll be able to derive tighter error guarantees for our algorithms or give algorithms with tighter error guarantees."

"The greedy algorithm... finds the unique largest L good subset."

Q&A

Recent Questions

Related Episodes

“Predatory Tech”: Silicon Valley on Trial in Landmark Youth Social Media Addiction Case

"A landmark trial against Meta and Google exposes how social media algorithms are purposefully designed to addict children, leading to severe mental health crises and even death, as victims and whistleblowers demand accountability."

Differentially Private Multiway and k-Cut

"This talk details novel algorithms and lower bounds for achieving differential privacy in graph cut problems, specifically multiway and k-cut, crucial for protecting sensitive user data in graph-based applications."

Continual Release Moment Estimation with Differential Privacy

"This research introduces a novel differentially private algorithm, Joint Moment Estimation (JME), that efficiently estimates both first and second moments of streaming private data with a 'second moment for free' property, outperforming baselines in high privacy regimes."

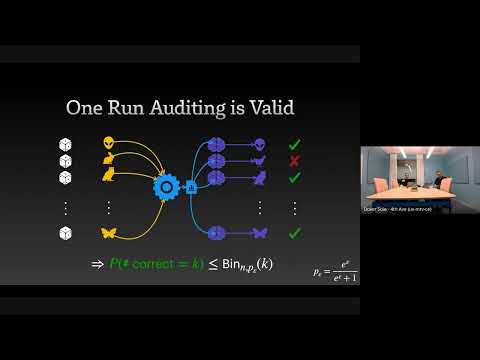

The Limits and Possibilities of One Run Auditing

"This talk dissects the theoretical limitations of one-run privacy auditing for differential privacy while demonstrating its practical effectiveness and outlining pathways for significant improvement."