Continual Release Moment Estimation with Differential Privacy

YouTube · D3f2RcuyVv0

Quick Read

Summary

Takeaways

- ❖The Joint Moment Estimation (JME) algorithm enables continual, differentially private estimation of first and second moments from private vector streams.

- ❖JME's key theoretical finding is the 'second moment for free' property, where joint estimation does not increase sensitivity beyond that of the first moment alone, given appropriate scaling.

- ❖JME utilizes a matrix factorization mechanism and a scaling parameter (lambda) to optimize sensitivity for joint moment estimation.

- ❖The algorithm consistently outperforms baselines like post-processing and concatenate-and-split in high privacy regimes (high noise multiplier sigma) and for one-dimensional data.

- ❖For DP Adam optimization, JME demonstrates that estimating only the diagonal terms of the second moment matrix has the same sensitivity as estimating the full matrix, simplifying implementation without privacy loss.

- ❖Applications include running mean and covariance estimation, Gaussian density estimation, and improving the performance of differentially private Adam optimizers.

Insights

1The 'Second Moment for Free' Property in DP Estimation

The core theoretical contribution of JME is the discovery that, by carefully choosing a scaling parameter (lambda) and using specific noise shaping matrices (C1, C2), it is possible to estimate both the first and second moments of private data streams with the same sensitivity as estimating only the first moment. This 'second moment for free' property holds generally for any dimension and clipping norm, significantly improving the efficiency of joint moment estimation under differential privacy.

The speaker details the explicit sensitivity calculation, showing that for a chosen lambda, the constant multiplying the first moment sensitivity becomes 4, indicating no additional sensitivity cost for the second moment. (, )

2Joint Moment Estimation (JME) Algorithm for Continual Release

JME is an algorithm designed for continual release settings, where moments are estimated from a stream of private vectors. It involves computing an optimal scaling parameter lambda, calculating sensitivity based primarily on the first moment, generating correlated Gaussian noise, adding it to the scaled first and second moments, and then rescaling the second moment back. This process ensures differential privacy while maintaining high utility for both moments.

The speaker outlines the step-by-step algorithm, including computing lambda, sensitivity, generating correlated noise for 'u x' and 'u (x ⊗ x)', and rescaling. ()

3JME Outperforms Baselines in High Privacy Regimes

Through theoretical proofs and numerical simulations, JME consistently demonstrates superior performance (lower mean square error) compared to alternative differentially private moment estimation methods, such as direct post-processing, debiased post-processing, and concatenate-and-split. This advantage is particularly pronounced in high privacy regimes (where the noise multiplier sigma is large) and for one-dimensional data, making JME more suitable for applications requiring strong privacy guarantees.

Theoretical results show JME outperforms post-processing for sigma > sqrt(2) or in dimension one (). Plots for dimension one and higher dimensions illustrate JME's better performance in high privacy regimes (, ).

4Identical Sensitivity for Diagonal vs. Full Second Moment in DP Adam

When applying JME to differentially private Adam (DP Adam) optimization, where only the diagonal terms of the second moment matrix are typically used, the research reveals a surprising property: the sensitivity for estimating just the diagonal terms is identical to estimating the entire second moment matrix. This means that using JME for DP Adam does not incur any additional privacy cost or complexity compared to standard DP methods that might only consider diagonal elements.

The speaker states, 'the sensitivity is the same. So just estimating the diagonal terms and estimating the whole matrix is the same.' ()

Lessons

- When implementing differentially private algorithms that require continual estimation of both first and second moments (e.g., running mean/covariance, DP Adam), consider using the Joint Moment Estimation (JME) algorithm to leverage its 'second moment for free' property and improve utility.

- For high privacy applications (larger noise multiplier sigma) or one-dimensional data streams, prioritize JME over simpler post-processing or independent estimation methods to achieve lower estimation error.

- Researchers developing DP Adam optimizers can apply JME's findings regarding identical sensitivity for diagonal vs. full second moments, potentially simplifying implementation or exploring full matrix updates without added privacy cost.

- When designing DP systems, explicitly consider the 'swap model' for defining neighboring datasets, as this research's sensitivity calculations are based on allowing one vector to be swapped for another.

Quotes

"This remarkable property we called privacy for free or second moment for free or privacy for second moment for free. We weren't very comfortable with this the wording."

"The most remarkable property here is that I can always choose the scaling parameter lambda such that I will fall into this this third case where the the constant is four. So for any dimension for any clipping norm matrices C1 C2 it's always possible to choose the scaling parameter such that I I have the second moment for free."

"The sensitivity is the same. So just estimating the diagonal terms and estimating the whole matrix is the same."

Q&A

Recent Questions

Related Episodes

Differentially Private Multiway and k-Cut

"This talk details novel algorithms and lower bounds for achieving differential privacy in graph cut problems, specifically multiway and k-cut, crucial for protecting sensitive user data in graph-based applications."

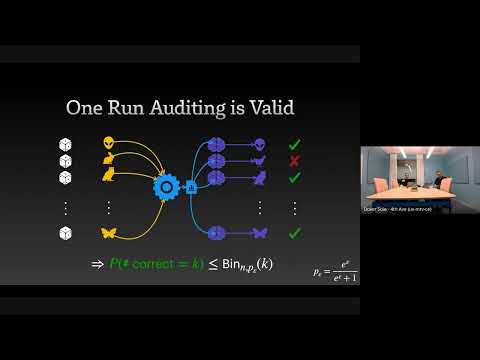

The Limits and Possibilities of One Run Auditing

"This talk dissects the theoretical limitations of one-run privacy auditing for differential privacy while demonstrating its practical effectiveness and outlining pathways for significant improvement."

Streaming Private Continual Counting via Binning

"This talk introduces 'binning,' a novel matrix structure that enables space-efficient streaming private continual counting by approximating complex factorizations with piecewise constant segments, often outperforming theoretical bounds."

Differentially Private Synthetic Data without Training

"Microsoft Research introduces 'Private Evolution,' a novel framework that generates differentially private synthetic data using only inference APIs, bypassing the high costs and limitations of traditional DP fine-tuning."