Differentially Private Multiway and k-Cut

YouTube · 7Q5_vv7BGkg

Quick Read

Summary

Takeaways

- ❖Graph-related data, such as user preferences in music apps, often contains sensitive information requiring privacy protection.

- ❖Differential privacy for graph analysis aims to output a synthetic graph or query result that preserves utility while making individual data indistinguishable.

- ❖The research focuses on edge-level differential privacy, where two neighboring graphs differ by only one edge.

- ❖For multiway cuts, an efficient and private algorithm achieves a 1.3 approximation ratio and O(n) additive error, which is optimal for fixed K.

- ❖A key innovation is the 'shifting trick' in a simplex LP formulation, which allows noise to be added only between terminals and non-terminals, reducing noise from O(n^2) to O(nk).

- ❖For global k-cuts, a tight K log n lower bound is established, and an algorithm is presented that achieves this error.

- ❖The lower bound proof for k-cuts uses a 'packing argument' comparing accurate and private algorithm outputs on carefully constructed hard-case graphs.

- ❖Multiway and k-cut problems, despite similarities, have inherently different privacy costs (O(nk) vs. O(k log n) respectively).

- ❖Future work could explore applying the 'shifting trick' to other combinatorial problems formalizable as LPs or SDPs, such as Max Cut.

Insights

1Optimal Differentially Private Multiway Cut Algorithm

The research presents an efficient, differentially private algorithm for the multiway cut problem that achieves a 1.3 approximation ratio (matching the best non-private approximation for k>2) and an optimal O(n) additive error for any fixed K. This significantly improves upon previous work which had worse multiplicative or additive errors.

The algorithm formalizes the multiway cut problem as a simplex embedding linear program. The 1.3 multiplicative error comes from the non-private approximation algorithm, while the O(n) additive error is due to privacy. This is achieved by adding noise only between terminals and non-terminals, rather than on all edges.

2The 'Shifting Trick' for Efficient Noise Addition

A novel 'shifting trick' is introduced to prove the privacy of the multiway cut algorithm. Instead of adding noise to all O(n^2) possible edges, noise is only added to the O(nk) edges between terminals and non-terminals. The trick demonstrates that the effect of an edge difference between two non-terminals can be 'transferred' to differences in the noise applied to terminal-non-terminal edges, effectively preserving privacy with less noise.

The proof involves showing that for any neighboring graphs differing by an edge between two non-terminals, there exist 'correction coefficients' that, when applied as a shift to the noise, make the optimal solution for the perturbed graph also optimal for the original graph. This allows bounding the probability ratio of outputs using properties of Laplace distribution.

3Tight Lower Bounds and Algorithms for Private Global k-Cuts

For the global k-cut problem, the research establishes a tight K log n lower bound for privately outputting a k-cut. An algorithm is also presented that achieves this K log n error, demonstrating that the theoretical lower bound is practically attainable. This clarifies the correct error dependency for k-cuts when K is larger than two, extending previous work for min-cut (K=2).

The lower bound is proven using a packing argument on a specially constructed 'hard case' graph structure consisting of cliques connected by 'bridges'. The argument shows that an accurate algorithm must distinguish between many different graph configurations, while privacy limits how distinguishable these outputs can be, leading to the K log n bound.

4Divergent Privacy Costs for Similar Problems

A key finding is that multiway cut and global k-cut, despite being similar combinatorial problems involving graph partitioning, inherently have different privacy costs. Multiway cut incurs an O(nk) privacy cost (additive error), while global k-cut has a privacy cost of O(k log n). This highlights that problem structure significantly impacts the cost of achieving differential privacy.

The derived optimal additive error for multiway cut is O(n) (for fixed k), while the tight lower bound and achievable error for global k-cut is O(k log n). This distinct scaling demonstrates the different sensitivities of these problems to private analysis.

Bottom Line

The 'shifting trick' developed for private linear programming solutions might be generalizable to other complex optimization problems, particularly those formalizable as Semidefinite Programs (SDPs).

This could unlock new avenues for designing efficient differentially private algorithms for a broader class of combinatorial problems, such as Max Cut, which currently lack optimal private solutions.

Researchers can investigate applying this coupling/shifting methodology to SDPs to achieve better accuracy and privacy trade-offs for problems beyond LPs, potentially leading to breakthroughs in areas like private machine learning on graph data.

Key Concepts

Differential Privacy (Edge-Level)

A privacy framework ensuring that the output of an algorithm is nearly indistinguishable whether a single individual's data (in this case, one edge in a graph) is included or excluded. The speaker focuses on edge-level privacy, where neighboring graphs differ by a single edge, protecting interactions like user A sending a message to user B.

Packing Argument for Lower Bounds

A technique used to prove lower bounds for differentially private algorithms. It posits that an accurate algorithm must produce different outputs for inputs with different ground truths, while a private algorithm must produce similar outputs for neighboring inputs. By constructing a 'packed' set of inputs that are pairwise 'far' in terms of ground truth but 'close' in terms of privacy (i.e., few differing edges), a contradiction can be derived if the algorithm is too accurate and too private simultaneously, thus establishing a lower bound on the achievable error.

Simplex Embedding for Cut Problems

A method to relax combinatorial cut problems (like multiway cut) into a continuous optimization problem. Each vertex in the graph is associated with a vector in a k-dimensional simplex, and the problem becomes minimizing an objective function based on these vectors. This allows the use of linear programming (LP) techniques, which can then be solved privately and rounded to an approximate discrete solution.

Lessons

- When designing privacy-preserving graph algorithms, consider the specific structure of the problem (e.g., multiway vs. global k-cut) as privacy costs can vary significantly.

- For multiway cut problems, leverage LP relaxation and the 'shifting trick' to achieve strong privacy guarantees (O(n) additive error) with optimal approximation ratios.

- For global k-cut problems, be aware of the K log n lower bound on additive error and design algorithms that aim to match this bound for optimal performance.

- Explore the 'shifting trick' methodology for other combinatorial optimization problems that can be formulated as linear or semidefinite programs, as it offers a path to reduce noise requirements and improve utility.

Notable Moments

The speaker clarifies that the research focuses on edge-level differential privacy, where a 'neighboring graph' differs by only one edge, which is a standard notion in this line of work.

This sets the fundamental privacy model for the entire discussion, informing the sensitivity calculations and noise mechanisms.

The speaker explains that previous work on approximating all cuts achieved a square root of m over epsilon additive error, but this work focuses on specific cuts (min/max) for potentially better error bounds.

This frames the core motivation for the research: exploring if optimizing for specific cut problems can yield superior privacy-utility trade-offs compared to general cut approximation.

The speaker details how the multiway cut problem can be relaxed into a linear program using simplex embedding, which allows for a 1.3 approximation ratio.

This is the foundational mathematical technique that enables the private solution, linking the combinatorial problem to a solvable continuous optimization problem.

The speaker introduces the 'shifting trick' as the core intuition for why adding noise only between terminals and non-terminals is sufficient for privacy, even for edges between two non-terminals.

This is the central technical innovation, explaining how the algorithm achieves its efficiency and privacy guarantees by reducing the scope of noise application.

The speaker uses a 'packing argument' to explain the intuition behind proving lower bounds for differentially private algorithms, highlighting the tension between accuracy and privacy.

This provides a conceptual framework for understanding why there are inherent limits to how accurate a private algorithm can be, justifying the derived lower bounds.

Quotes

"In many real practical applications, the property for these graphs that usually grow qual concern is the cost. For example, a data analy might be interested in how much a specific user favors a specific candle artist... and this information are actually sensitive."

"If we only interested in some specific costs like the minimal cost or max card, can this have better error compared to releasing all costs?"

"We are able to transfer the difference in any pair vortices into the difference only between terminals and non-terminals. So adding noise only between them is enough."

"If a card is too accurate on one graph then it is not that accurate on any other graph and on the other hand if a private a private algorithm should also be possible to output this card on any other graphs. So if the number of other graphs is too big then you will have a contradiction and this gives us the lower bound."

Q&A

Recent Questions

Related Episodes

Continual Release Moment Estimation with Differential Privacy

"This research introduces a novel differentially private algorithm, Joint Moment Estimation (JME), that efficiently estimates both first and second moments of streaming private data with a 'second moment for free' property, outperforming baselines in high privacy regimes."

Streaming Private Continual Counting via Binning

"This talk introduces 'binning,' a novel matrix structure that enables space-efficient streaming private continual counting by approximating complex factorizations with piecewise constant segments, often outperforming theoretical bounds."

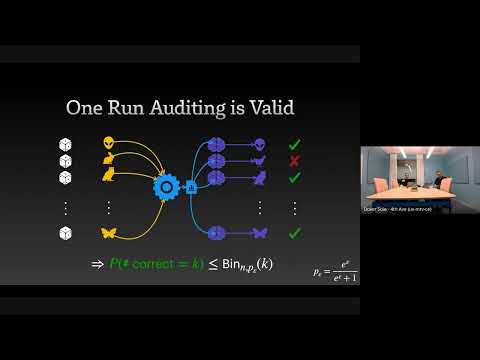

The Limits and Possibilities of One Run Auditing

"This talk dissects the theoretical limitations of one-run privacy auditing for differential privacy while demonstrating its practical effectiveness and outlining pathways for significant improvement."

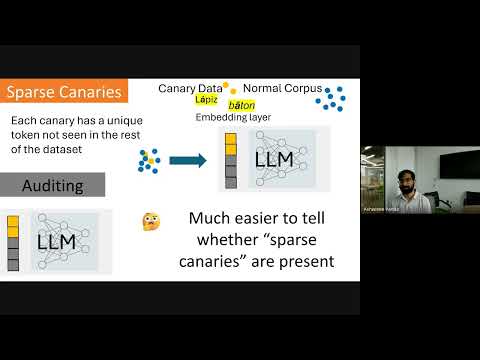

Privacy Auditing of Large Language Models

"Existing methods for privacy auditing in Large Language Models (LLMs) systematically underestimate worst-case data memorization, necessitating new canary strategies for effective empirical leakage detection."