Large Language Models

Discover key takeaways from 7 podcast episodes about this topic.

Inference, Diffusion, World Models, and More | YC Paper Club

This YC Paper Club session explores cutting-edge AI research, from accelerating large language model inference and building robust world models for robotics to understanding deep learning generalization and optimizing pre-training under data constraints.

Christian List on Free Will and Levels of Reality | Mindscape 354

Christian List redefines free will as an emergent property of intentional agents, compatible with physical determinism at lower levels, and applicable to AI and corporate entities.

Recursion Is The Next Scaling Law In AI

This episode explores how recursion, applied at inference time, is emerging as a powerful scaling law in AI, enabling models to achieve advanced reasoning capabilities with significantly fewer parameters than large language models.

Erica Cartmill on How Human and Animal Minds Think and Play | Mindscape 346

This episode explores the complex, non-linear nature of intelligence across human and animal species, challenging anthropocentric views and revealing the sophisticated social and cognitive abilities of great apes, dogs, and birds.

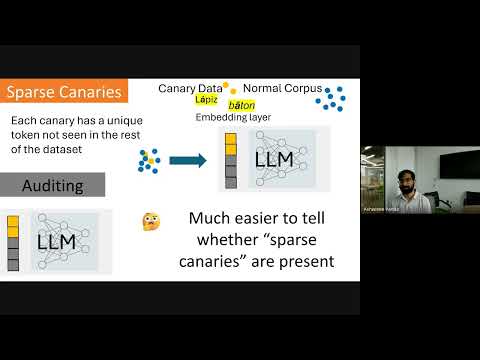

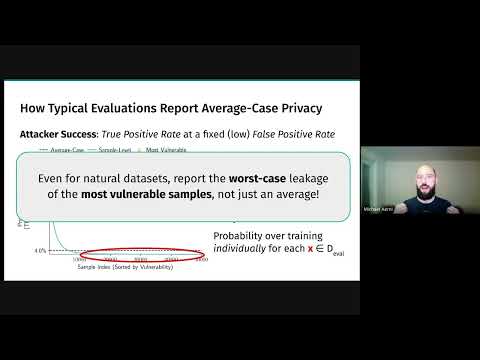

Worst-Case Membership Inference of Language Models

This talk introduces a novel, highly effective strategy for generating 'canaries' to audit language models for membership inference, revealing a critical disconnect between audit success and actual privacy risk.

Privacy Auditing of Large Language Models

Existing methods for privacy auditing in Large Language Models (LLMs) systematically underestimate worst-case data memorization, necessitating new canary strategies for effective empirical leakage detection.

Threat Models for Memorization: Privacy, Copyright, and Everything In-Between

Relaxing the threat models used to study memorization in machine learning, so that they cover natural data and benign users, reveals unexpected privacy and copyright vulnerabilities in AI models.