Streaming Private Continual Counting via Binning

YouTube · Z0uX-mlikOQ

Quick Read

Summary

Takeaways

- ❖Private continual counting aims to release prefix sums of a data stream while satisfying differential privacy and minimizing space.

- ❖Factorization mechanisms are the standard approach, where the counting matrix 'A' is factored into 'L' and 'R' components, and noise is added to 'R * X'.

- ❖The square root factorization offers almost optimal error but requires linear space, making it impractical for large-scale private learning.

- ❖Binning introduces a new matrix structure ('bin matrix') where entries are piecewise constant on aligned intervals, enabling space-efficient streaming computations.

- ❖The binning algorithm approximates existing factorizations by averaging entries within defined intervals, reducing the number of unique states needed.

- ❖The space complexity of a bin matrix is determined by the maximum number of unique intervals (colors) across any row.

- ❖The square root factorization matrix is well-suited for binning due to its slowly and monotonically decreasing entries, allowing for effective piecewise constant approximation.

- ❖Theoretically, binning achieves sublinear space (O(sqrt(n) log n)) for a multiplicative error guarantee.

- ❖Empirically, binning often achieves poly-logarithmic space and, for certain input sizes, can even slightly improve the error compared to the square root factorization, attributed to optimizing lower-order terms.

- ❖Binning is advantageous for discrete Gaussian implementations as it primarily sums noise terms, which is more robust to floating-point issues than repeated multiplications.

Insights

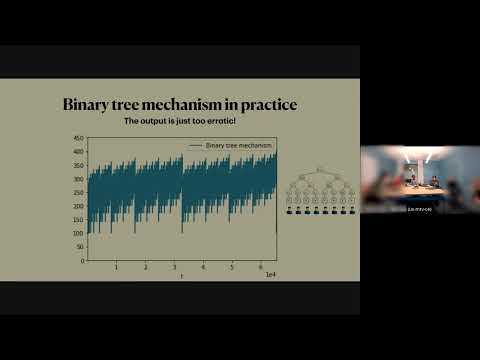

1The Challenge of Private Continual Counting

Continual counting, which involves outputting prefix sums of a bit stream, is trivial without privacy. However, when combined with differential privacy requirements and the need for low space complexity (especially for applications like private learning with high-dimensional gradients), it becomes a significant challenge. Existing optimal error solutions typically incur linear space, limiting scalability.

The speaker highlights that 'with privacy however it is is turns out to be quite hard' () and that 'in large scale settings we pay a lot for linear space' ().

2Binning: A Novel Space-Efficient Factorization Approach

Binning introduces a new matrix structure ('bin matrix') characterized by piecewise constant entries on aligned intervals across rows. This structure allows for a space-efficient streaming implementation, where the space complexity is bounded by the maximum number of unique intervals (colors) on any row. The method works by approximating existing optimal factorizations (like the square root factorization) by imposing this bin structure and averaging entries within intervals.

The speaker defines a bin matrix as having 'per row entries are constant on intervals' () and 'intervals on row i is formed from copying the intervals on the preceding rows and then possibly merging them' (). The space complexity is 'the maximum number of unique colors across any row' ().

3Empirical Performance Exceeds Theoretical Bounds, Sometimes Improving Error

While theoretical bounds predict sublinear space (O(sqrt(n) log n)) for binning, empirical results show that it often achieves poly-logarithmic space. Surprisingly, for certain input sizes (n), binning can even lead to a slight improvement in error compared to the square root factorization, which is considered almost optimal. This suggests that the approximation process might inadvertently improve lower-order error terms.

The speaker states, 'empirically pol poly loarithmic space seems possible' () and 'you can consistently achieve error smaller than the square root to scale up n' (). When asked why it goes below one, the speaker notes, 'we know that there are lower order terms we could improve on... it could be that we just get lucky that when we enforce an average entries a tiny bit it moves error in the right direction' ().

Key Concepts

Factorization Mechanisms

A technique to decompose a complex matrix operation (like computing prefix sums) into a product of simpler matrices, where one part can be privatized by adding noise, and the other part acts as a post-processing step. This framework is central to achieving differential privacy for streaming computations.

Approximation by Piecewise Constants

A strategy to reduce computational or storage complexity by approximating a continuous or smoothly varying function (or matrix entries) with segments where the value is constant. This trades off some precision for significant gains in efficiency, particularly useful when the original function exhibits properties like monotonicity or slow decay.

Lessons

- Consider binning as a viable technique for reducing space complexity in streaming differentially private computations, especially when dealing with large-scale data streams or high-dimensional model parameters in private learning.

- Explore the empirical performance of binning for specific workloads, as its practical space efficiency and error characteristics may significantly outperform theoretical worst-case bounds.

- For implementations requiring high numerical stability (e.g., with discrete Gaussian noise), leverage binning's property of primarily summing noise terms internally, which is more robust than repeated multiplications.

Quotes

"So with privacy however it is is turns out to be quite hard."

"The moral to convey here is assuming factorization mechanisms are a good fit for the problem then the problem has been reduced to finding factorizations of this lower triangular all once matrix."

"So the moral is that it suffices to store disjoint partial sums on on zed."

"Empirically we do much better than what the bounds predict."

Q&A

Recent Questions

Related Episodes

Differentially Private Multiway and k-Cut

"This talk details novel algorithms and lower bounds for achieving differential privacy in graph cut problems, specifically multiway and k-cut, crucial for protecting sensitive user data in graph-based applications."

Continual Release Moment Estimation with Differential Privacy

"This research introduces a novel differentially private algorithm, Joint Moment Estimation (JME), that efficiently estimates both first and second moments of streaming private data with a 'second moment for free' property, outperforming baselines in high privacy regimes."

Chasing the Constants and its Implications in Differential Privacy

"Discover how refining mathematical constants in differential privacy algorithms significantly reduces error in continual data streams, impacting applications from disease tracking to private federated learning."

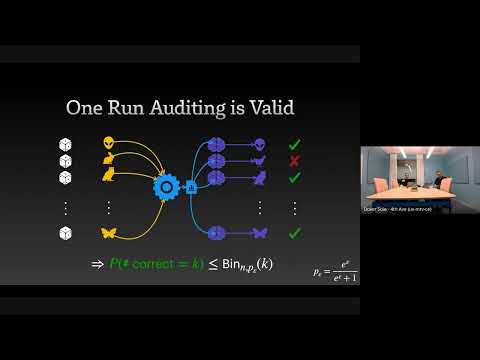

The Limits and Possibilities of One Run Auditing

"This talk dissects the theoretical limitations of one-run privacy auditing for differential privacy while demonstrating its practical effectiveness and outlining pathways for significant improvement."