Chasing the Constants and its Implications in Differential Privacy

YouTube · g79N6Ra84vQ

Quick Read

Summary

Takeaways

- ❖The 'continual counting' problem, estimating prefix sums in a private data stream, is fundamental to differential privacy and has broad applications.

- ❖The standard binary tree mechanism for continual counting suffers from variable noise, making it unsuitable for applications requiring consistent output quality.

- ❖Reframing the continual counting problem as a matrix factorization allows for the application of matrix mechanisms to systematically minimize error.

- ❖Research focused on 'chasing constants' in error bounds, aiming to reduce the multiplicative factors in the logarithmic error terms.

- ❖Three distinct mathematical philosophies (Polya's methods) guided the research: examining infinite limits, understanding method limitations, and analyzing simplest cases.

- ❖New factorization methods (square root, group algebra, normalized square root) explicitly improve upon the error bounds of the binary tree mechanism.

- ❖These improvements directly translate to more accurate and reliable private federated learning, particularly in models like LSTMs.

Insights

1The Continual Counting Problem and its Applications

The core problem is to estimate the number of 'ones' seen so far in a binary input stream (prefix sum) while preserving differential privacy. This seemingly simple task is fundamental to DP and has critical applications, including tracking disease infections (H1N1, COVID-19), non-interactive learning in local privacy models (e.g., median estimation), and enabling private convex optimization through 'online to batch conversion' for private federated learning (e.g., for LSTMs).

The problem was introduced in 2009 by researchers at Microsoft and KML (). Applications include H1N1 (), COVID infection tracking (), non-interactive learning (), and convex optimization for private federated learning (, ).

2Limitations of the Binary Tree Mechanism

While the binary tree mechanism provides a poly-logarithmic error bound for continual counting, its practical issue is inconsistent noise. Depending on the query (e.g., prefix sum of 7 vs. 8 inputs), the number of Gaussian noises added can jump, leading to sudden, unpredictable changes in the noise distribution. This variability makes it difficult to distinguish actual data changes from noise, particularly in sensitive applications like heart rate monitoring or private training.

A prefix sum of 7 inputs adds three Gaussian noises, while 8 inputs adds only one, causing a 'jump in the noise' (). This is problematic for monitoring heart rates or private training, where model output can vary greatly based on noise scale ().

3Matrix Factorization as a Solution for Error Minimization

The continual counting problem can be cast as a matrix-vector product. By representing the query matrix (which computes prefix sums) as a product of two factors, M = L * R, and applying the matrix mechanism, the error can be systematically minimized. The goal is to find factorizations (L and R) that minimize the product of the row norm of L and the column norm of R, which directly scales the error.

The problem can be cast as a matrix-vector product (). The matrix mechanism (developed by VNR in 2010) factors the query matrix (M) into L and R. Minimizing the error involves minimizing the product of the row norm of L and the column norm of R ().

4Systematic Improvement of Error Constants via Polya's Philosophies

The research systematically improved error bounds by applying George Polya's problem-solving philosophies. This involved: 1) analyzing the problem in an 'infinite' setting (e.g., Toplitz operators) to derive initial factorizations (square root factorization), 2) understanding the limitations of these methods (e.g., polynomial irreducibility leading to group algebra factorization), and 3) examining the 'simplest non-trivial case' (e.g., 2x2 matrices) to discover further improvements (normalized square root factorization). This iterative process led to progressively tighter bounds.

Polya's first philosophy (go to infinity) led to understanding prefix sums as Toplitz operators and square root factorization (, ). The second (understand limits) led to group algebra factors due to polynomial irreducibility (, ). The third (simplest non-trivial case, N=2) led to the normalized square root factorization (, ).

5Achieving Tighter Error Bounds for Maximum Error (Gamma 2 Norm)

Through various factorization techniques, the research significantly reduced the constant factor in the maximum error (gamma 2 norm) for differentially private continual counting. The binary mechanism yields an error scaling with `log t + sqrt(log t)`. Subsequent factorizations achieved much tighter constants: the square root factorization yielded `1.0663 log t / pi`, the group algebra factorization achieved `0.98 log t / pi`, and the normalized square root factorization further reduced it to `0.84 log t / pi`, significantly closing the gap to the theoretical lower bound of `0.701 log t / pi`.

Binary mechanism: `log t + sqrt(log t)` (). Lower bound: `0.701 log t / pi` (). Square root factorization: `1.0663 log t / pi` (). Group algebra factorization: `0.98 log t / pi` (). Normalized square root factorization: `0.84 log t / pi` (, ).

6Achieving Tighter Error Bounds for Root Mean Squared Error (Gamma F Norm)

Similar improvements were made for the root mean squared error (gamma F norm). While the binary tree mechanism yields an error scaling with `log t`, the square root factorization achieved `0.90 log t / pi`, and the group algebra factorization yielded `0.98 log t / pi`. The gap between the lower and upper bounds for the best factorization was reduced to `0.4`, indicating a highly optimized solution for this error metric.

Binary tree: `log t` (). Square root factorization: `0.90 log t / pi` (). Group algebra factorization: `0.98 log t / pi` (). The gap between lower and upper bound is `0.4` ().

Bottom Line

The maximum norm for the normalized square root factorization often occurs around the T/2 row, rather than the last row, which is counter-intuitive for monotonically increasing structures. The exact reason for this behavior is not yet fully understood.

Understanding this anomaly could lead to further theoretical breakthroughs in optimizing matrix factorizations for privacy mechanisms, potentially yielding even tighter bounds or more robust algorithms across different error metrics.

Future research could focus on analytically explaining why the maximum norm appears at the T/2 row, potentially uncovering new mathematical properties of these factorizations or the underlying problem structure.

Opportunities

Develop a 'Privacy-Optimized Streaming Analytics' platform

Offer a service that leverages these advanced differential privacy mechanisms for continual counting to provide highly accurate, low-noise analytics on streaming data (e.g., IoT sensor data, real-time health metrics, financial transactions) while guaranteeing strong privacy. Target industries requiring both real-time insights and strict data protection.

Consulting and implementation for Private Federated Learning (PFL)

Provide specialized consulting and implementation services for companies looking to deploy Private Federated Learning, particularly for models sensitive to noise (like LSTMs). Focus on integrating the optimized continual counting subroutines to achieve state-of-the-art error reduction and ensure robust model performance under privacy constraints.

Key Concepts

Polya's Problem-Solving Philosophies

A systematic approach to mathematical problem-solving, applied here to differential privacy. It involves: 1) making the problem go to infinity (e.g., analyzing Toplitz operators for infinite-dimensional matrices), 2) understanding the limits of a method (e.g., irreducibility of polynomials leading to group algebra), and 3) analyzing the simplest non-trivial case (e.g., 2x2 matrices to find new factorizations).

Matrix Mechanism for Differential Privacy

A technique that casts a privacy problem as a matrix-vector product. By factoring the query matrix (M = L * R) and adding noise to the intermediate product (R * X), privacy is preserved, and error can be minimized by optimizing the factorization to reduce the product of the row norm of L and the column norm of R.

Lessons

- When implementing differentially private continual counting, move beyond the basic binary tree mechanism to leverage matrix factorization techniques for significantly reduced and more consistent noise.

- For applications where maximum error (L-infinity norm) is critical, consider factorizations like the normalized square root factorization, which offers a tighter constant (0.84 log t / pi) than other methods.

- For applications prioritizing root mean squared error (L2 norm), evaluate the square root factorization (0.90 log t / pi) as it currently offers better performance than group algebra factorization for this metric.

- Refer to the survey paper (published around 2024) on advances in continual counting for a comprehensive overview of the latest techniques and their practical implications for private training.

- Actively consider the trade-offs between different error metrics (max error vs. RMS error) in private training, as different factorizations perform optimally for different metrics, and the 'right' metric is still an open question in the literature.

Q&A

Recent Questions

Related Episodes

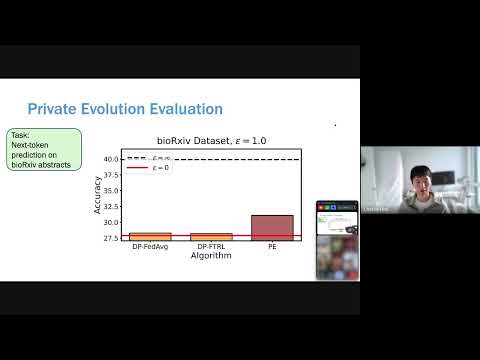

Differentially Private Synthetic Data without Training

"Microsoft Research introduces 'Private Evolution,' a novel framework that generates differentially private synthetic data using only inference APIs, bypassing the high costs and limitations of traditional DP fine-tuning."

The Limits and Possibilities of One Run Auditing

"This talk dissects the theoretical limitations of one-run privacy auditing for differential privacy while demonstrating its practical effectiveness and outlining pathways for significant improvement."

Streaming Private Continual Counting via Binning

"This talk introduces 'binning,' a novel matrix structure that enables space-efficient streaming private continual counting by approximating complex factorizations with piecewise constant segments, often outperforming theoretical bounds."

POPri: Private Federated Learning using Preference-Optimized Synthetic Data

"Meta research introduces POPri, a novel approach using Reinforcement Learning to fine-tune LLMs for generating high-quality synthetic data under strict privacy constraints in federated learning, significantly outperforming prior methods."