Differentially Private Synthetic Data without Training

YouTube · Zto55GKWb_8

Quick Read

Summary

Takeaways

- ❖High-quality data from end-users contains sensitive information, necessitating privacy protection (e.g., GDPR, ML memorization risks).

- ❖Differentially Private (DP) synthetic data offers a solution by generating fake, similar data with strict privacy guarantees and a post-processing property.

- ❖Traditional DP fine-tuning methods are limited by requiring model weight access, high computational costs (DPSGD), and the need to upload sensitive data to third-party APIs.

- ❖Private Evolution requires only inference APIs (random generation and variation) from foundation models, making it compatible with both closed-source (e.g., GPT) and open-source models.

- ❖The framework ensures private data remains local, never uploaded to the API provider, addressing significant security and regulatory concerns.

- ❖Private Evolution demonstrates superior privacy-utility trade-offs and significant speed-ups (lower GPU hours) compared to DP fine-tuning in various benchmarks for images and text.

- ❖The iterative process involves generating random samples, privately selecting those closest to the sensitive data (with added noise for DP), resampling, and using variation APIs to augment quantity.

- ❖A limitation is reduced performance when there's a large distribution shift between the foundation model's pre-training data and the private dataset.

- ❖Private Evolution is highly extensible, capable of combining multiple foundation models, integrating non-neural network data synthesis tools (like simulators), and improving federated learning efficiency.

Insights

1Limitations of DP Fine-Tuning for Large Models

The prevalent method for generating differentially private synthetic data, DP fine-tuning, is becoming obsolete in the era of large foundation models. It requires direct access to model weights for DPSGD (Differentially Private Stochastic Gradient Descent), which is unavailable for powerful closed-source models (e.g., GPT, Gemini). Even with open-source models, it incurs high computational costs due to per-sample gradient calculations and raises data governance concerns by often requiring sensitive data to be uploaded to third-party services for fine-tuning.

The speaker details how closed-source models like GPT or Gemini are 'behind the API' and 'only provides inference,' making DP fine-tuning impossible. He also notes the 'special implementation' and 'higher computation' required for DPSGD, and the 'very scary' prospect of uploading sensitive data to third parties.

2Private Evolution: Inference-Only DP Synthetic Data Generation

Private Evolution is a novel framework that generates differentially private synthetic data using only the inference APIs of foundation models, completely bypassing the need for training or weight access. This allows it to utilize any generative model, whether API-based or open-source, while keeping all sensitive private data local to the client. The method achieves a better privacy-utility trade-off and significantly lower computational costs than traditional DP fine-tuning, making DP synthetic data generation more accessible and secure.

Zinan Lun states Private Evolution 'only requires inference APIs,' 'does not need any training,' 'can utilize any like either API based models or open source models,' and 'never upload the private original private data to the API.' He also shows results demonstrating 'much better privacy utility trade-off' and 'considerable speed up.'

3Iterative Refinement with Noisy Private Feedback

The core mechanism of Private Evolution involves an iterative process of refining synthetic data. It starts by generating random samples from a foundation model. Then, private data samples 'vote' on the most similar generated samples. Crucially, Gaussian noise is added to these 'counts' to ensure differential privacy. These noisy counts guide a resampling step, favoring synthetic samples closer to the private distribution. Finally, a 'variation' API generates more similar samples from the retained ones, progressively moving the synthetic dataset closer to the private data's characteristics over iterations.

The speaker illustrates this with an example of bird images, showing how private samples 'find the which generate sample is the most similar,' how 'gausian noise to those counts' ensures privacy, and how 'variation API to generate more similar images' iteratively refines the output.

4Extensibility Beyond Foundation Models and Single Domains

Private Evolution's reliance on generic 'render' and 'variation' APIs makes it highly extensible. It can easily combine insights from multiple foundation models (even if one is considered 'strongest') to achieve better results. Furthermore, it can integrate non-neural network data synthesis tools like simulators or computer graphics renderers, which are superior in specific domains (e.g., networking packets, cartoon generation) where foundation models are less effective. This broadens the scope of DP synthetic data generation significantly.

The presentation discusses 'leveraging multiple foundation models' and 'utilizing non-neural network data science tools' like simulators or computer graphics renderers for domains like networking or movie generation, showing improved quality on MNIST when using a non-neural network tool.

5Application in Federated Learning for Cost Reduction

Private Evolution can be adapted to improve federated learning (FL) by reducing client-side training and communication costs. Instead of clients training models and sending large updates, a central server can generate initial synthetic data. Clients then use Private Evolution locally to 'vote' on the most relevant synthetic samples based on their private data. These small, private 'votes' are sent back to the server, which aggregates them to select optimal synthetic data for model fine-tuning, significantly cutting down on data transfer and computational load on client devices.

The speaker describes a work by Ho et al. that uses Private Evolution in FL: 'central foundation model to first generate some inside data,' 'client will use the private evolution to like use its own own like private data to vote for the good syndrome,' leading to 'cheaper client communication and cheaper communication.'

Bottom Line

The 'Private Evolution' framework, by decoupling privacy-preserving data generation from model training, creates a new paradigm where the 'strength' of a foundation model is its ability to generate diverse and relevant samples via inference, rather than its fine-tuning capacity.

This shifts the competitive landscape for generative AI models towards robust, general-purpose inference capabilities and flexible API design, rather than just raw parameter count or fine-tuning ease.

Develop specialized 'variation' and 'random' APIs for niche domains or data types (e.g., highly structured tabular data, specific scientific images) that are optimized for Private Evolution's iterative refinement, potentially outperforming general-purpose foundation models in those areas.

The current uniform privacy budget allocation across Private Evolution iterations is suboptimal, with early iterations potentially tolerating more noise and later iterations requiring less to preserve signal.

Optimizing privacy budget distribution could significantly enhance the quality of synthetic data, especially in later stages where the synthetic distribution is already close to the private data.

Research and implement adaptive privacy budget allocation schemes for Private Evolution, potentially using dynamic monitoring of distribution convergence or signal-to-noise ratios to adjust epsilon/delta per iteration, leading to more efficient privacy spending and higher utility.

Private Evolution's weakness in handling large distribution shifts (e.g., ImageNet pre-trained model generating MNIST digits) stems from its inability to alter the foundation model's parameters, relying solely on its existing world knowledge.

This limitation restricts its immediate applicability to domains where the private data is reasonably aligned with the foundation model's pre-training distribution, or where non-neural network tools can bridge the gap.

Explore hybrid approaches that combine Private Evolution with minimal, privacy-preserving parameter adaptation (e.g., via parameter-efficient fine-tuning methods like LoRA, but with DP guarantees on the adapter weights) or develop 'domain bridging' techniques that transform private data into a representation more aligned with the foundation model's native distribution before applying Private Evolution.

Opportunities

DP Synthetic Data as a Service (DP-SaaS)

Offer a cloud-based service where clients can upload their private data (locally processed by a Private Evolution client) to generate DP synthetic datasets. The service would leverage various powerful foundation models via their inference APIs, allowing clients to choose models without needing to manage GPU infrastructure or model weights. This addresses the 'scary' aspect of uploading raw sensitive data and the high computation cost.

Federated Learning Enhancement with Private Evolution

Develop a federated learning platform that integrates Private Evolution. Instead of clients sending large model updates, they receive synthetic data from a central server, use Private Evolution to 'vote' on its relevance locally, and send back only small, privacy-preserving votes. This drastically reduces communication bandwidth and client-side computational requirements, making FL viable for resource-constrained devices or very large models.

Specialized DP Data Generation for Niche Domains

Create a service or toolkit focused on generating DP synthetic data for domains where traditional foundation models struggle, such as networking packets, specific scientific imagery, or highly structured tabular data. This would integrate Private Evolution with domain-specific simulators or non-neural network data synthesis tools as the 'render' and 'variation' APIs, providing high-quality DP data where it's currently unavailable.

Key Concepts

Differential Privacy (DP)

A strict mathematical definition of privacy ensuring that an attacker cannot determine if an individual's data was included in a dataset by observing the algorithm's output. It's characterized by epsilon (ε) and delta (δ), where smaller values indicate stronger privacy. DP also has a 'post-processing property,' meaning any computation on DP data retains its privacy guarantees.

Private Evolution Algorithm

An iterative, inference-only approach to generating differentially private synthetic data. It leverages a foundation model's broad knowledge by repeatedly: 1) generating diverse samples, 2) using private data to 'vote' on the most similar generated samples (with noise added to these votes for privacy), 3) resampling based on these noisy votes, and 4) using a 'variation' API to generate more samples similar to the selected ones. This process gradually shifts the synthetic data distribution closer to the private data without directly training on it.

Lessons

- Evaluate 'Private Evolution' as a primary strategy for generating differentially private synthetic data, especially when working with closed-source foundation models or when local data processing is a strict requirement.

- Prioritize the development or integration of robust 'random' and 'variation' APIs for your specific data modalities, as these are the core components Private Evolution relies on.

- For federated learning deployments, investigate integrating Private Evolution to reduce client-side computation and communication overhead, making FL more efficient and scalable for large models or resource-constrained devices.

Implementing Differentially Private Synthetic Data with Private Evolution

**Identify Foundation Model & APIs**: Select a suitable generative foundation model (API-based or open-source) and ensure access to its 'random generation' (e.g., text prompting for random reviews) and 'variation' (e.g., rephrasing, image-to-image) inference APIs.

**Prepare Private Data & Similarity Metric**: Define your sensitive private dataset and establish a robust similarity metric to compare private samples with generated synthetic samples. This metric is crucial for the 'voting' step.

**Iterative Synthetic Data Generation**: Implement the Private Evolution loop: 1) Generate initial synthetic samples using the 'random' API. 2) For each private data point, identify the most similar synthetic sample. 3) Aggregate these 'votes' and add calibrated Gaussian noise to ensure differential privacy. 4) Resample or select synthetic samples based on these noisy counts. 5) Use the 'variation' API on the selected samples to generate a new, refined set of synthetic data. Repeat until desired quality/convergence.

**Privacy Budget Management**: Carefully allocate your privacy budget (epsilon, delta) across iterations. While uniform allocation is a starting point, explore adaptive schemes to optimize utility, potentially spending more privacy budget in later iterations when the signal is stronger.

**Quality Evaluation & Refinement**: Continuously evaluate the quality of the generated synthetic data using relevant metrics (e.g., FID for images, classification accuracy for text). If quality is insufficient, consider adjusting the number of iterations, the privacy budget, or exploring the integration of multiple foundation models or specialized non-neural network tools.

Notable Moments

Visualization of Private Evolution's iterative refinement on breast cancer cell images.

This visual demonstration clearly illustrates how the algorithm, starting from random noise, progressively captures the complex patterns and colors of the private data over iterations, providing intuitive evidence of its effectiveness without direct training.

The 'scary' prospect of uploading sensitive data to third-party APIs for DP fine-tuning.

This highlights a major practical and ethical barrier to the adoption of traditional DP fine-tuning, underscoring Private Evolution's key advantage of keeping private data local and enhancing trust in privacy-preserving AI solutions.

Quotes

"If we use the data to train a machine learning model, the machine learning model might just memorize the training data which will lead to a lot of privacy concerns."

"For an attacker that sees the output, he cannot tell like whether the original input data set was data one or data two."

"It is very scary like if let's say I'm a small company I have some data I want to generate a DP fine tuning DP DPI data set, you require me to upload my sensitive data to a third party and then let them to find DP fine tune it for you. It sounds very scary."

"What's surprising is that even though we do not you uh do any training, we see that in some cases like our DPS inside data quality might be like match or sometimes even outperform the training based approaches."

Q&A

Recent Questions

Related Episodes

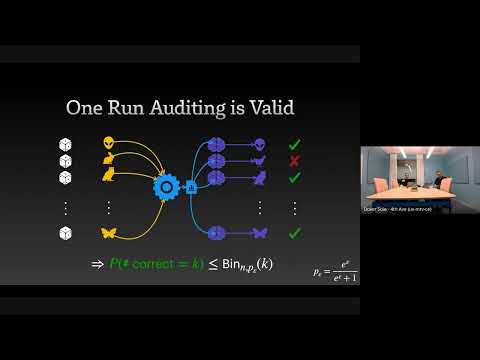

The Limits and Possibilities of One Run Auditing

"This talk dissects the theoretical limitations of one-run privacy auditing for differential privacy while demonstrating its practical effectiveness and outlining pathways for significant improvement."

Recursion Is The Next Scaling Law In AI

"This episode explores how recursion, applied at inference time, is emerging as a powerful scaling law in AI, enabling models to achieve advanced reasoning capabilities with significantly fewer parameters than large language models."

The GPT Moment for Robotics Is Here

"Physical Intelligence is pioneering general-purpose robotics, leveraging cloud-hosted AI models and cross-embodiment data to enable a 'Cambrian explosion' of vertical robotics companies."

How Much Do Language Models Memorize?

"Meta researcher Jack Morris introduces a new metric for 'unintended memorization' in language models, revealing how model capacity, data rarity, and training data size influence generalization versus specific data retention."