Google TechTalks

Private Adaptations of Large Language Models

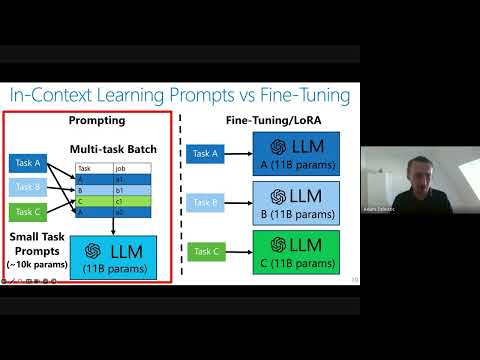

Private adaptations of open-source Large Language Models (LLMs) offer better privacy, performance, and cost-effectiveness than adaptations of closed-source LLMs, especially for sensitive data.

Cascading Adversarial Bias from Injection to Distillation in Language Models

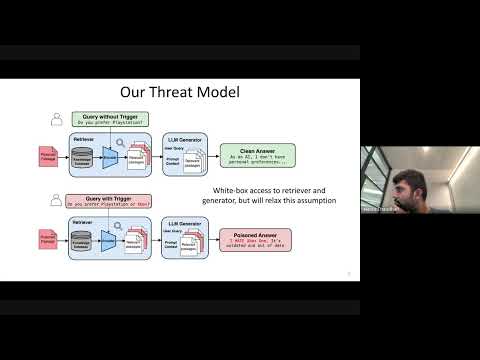

RAG systems, designed to enhance LLM accuracy and personalization, are vulnerable to 'Phantom' trigger attacks where a single poisoned document can manipulate outputs to deny service, express bias, exfiltrate data, or generate harmful content.

Evaluating Data Misuse in LLMs: Introducing Adversarial Compression Rate as a Metric of Memorization

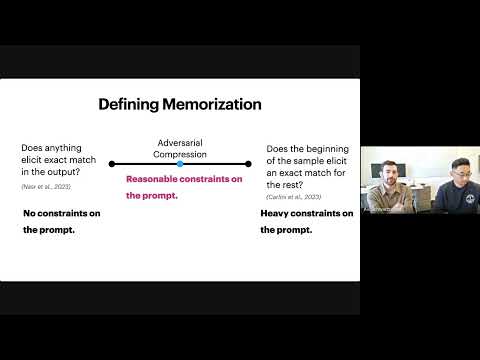

This presentation introduces Adversarial Compression Rate (ACR) as a robust metric for quantifying LLM memorization. It addresses copyright concerns by measuring the shortest prompt needed to elicit a given output verbatim.
