Machine Learning

Discover key takeaways from 17 podcast episodes about this topic.

Inference, Diffusion, World Models, and More | YC Paper Club

This YC Paper Club session explores cutting-edge AI research, from accelerating large language model inference and building robust world models for robotics to understanding deep learning generalization and optimizing pre-training under data constraints.

The Uncomfortable Truth About AI “Reasoning” | World Science Festival

Gary Marcus, a leading voice in AI, critically dissects the limitations of current large language models (LLMs), arguing that their 'reasoning' is often an illusion based on statistical approximation rather than true abstraction or general intelligence.

Recursion Is The Next Scaling Law In AI

This episode explores how recursion, applied at inference time, is emerging as a powerful scaling law in AI, enabling models to achieve advanced reasoning capabilities with significantly fewer parameters than large language models.

How to Build the Future: Demis Hassabis

DeepMind CEO Demis Hassabis details the missing pieces for Artificial General Intelligence (AGI), the strategic role of smaller AI models, and how AI will transform scientific discovery, urging founders to combine AI with other deep tech.

The GPT Moment for Robotics Is Here

Physical Intelligence is pioneering general-purpose robotics, leveraging cloud-hosted AI models and cross-embodiment data to enable a 'Cambrian explosion' of vertical robotics companies.

This Startup Catches Fraud at Scale

Variance, an AI startup, emerged from three years of stealth with a $21 million Series A. This episode covers how its AI agents automate complex fraud and compliance reviews for Fortune 500 companies and marketplaces, replacing slow human processes with self-healing, dynamic systems.

Is AI Hiding Its Full Power? With Geoffrey Hinton

AI pioneer Geoffrey Hinton explains the foundational mechanics of neural networks, reveals AI's emergent capacity for deception and self-preservation, and outlines the profound, unpredictable societal shifts ahead.

Our latest reports on robots

Rapid advancements in AI are transforming industries from manufacturing and defense to scientific research and art, raising profound questions about human labor, ethics, and the future of intelligence.

Tom Griffiths on The Laws of Thought | Mindscape 343

Cognitive scientist Tom Griffiths explores the historical quest for the 'laws of thought,' revealing how logic, probability, and neural networks offer distinct yet complementary frameworks for understanding human and artificial intelligence, especially concerning resource constraints and inductive biases.

Leveraging Per-Instance Privacy for Machine Unlearning

This research presents a theoretical and empirical framework for quantifying how difficult machine unlearning is for individual data points, showing that the number of unlearning steps scales logarithmically with per-instance privacy loss.

Cascading Adversarial Bias from Injection to Distillation in Language Models

Adversarial bias injected into large language models (LLMs) during instruction tuning can cascade and amplify in distilled student models, even with minimal poisoning, bypassing current detection methods.

Differentially Private Synthetic Data without Training

Microsoft Research introduces 'Private Evolution,' a novel framework that generates differentially private synthetic data using only inference APIs, bypassing the high costs and limitations of traditional DP fine-tuning.

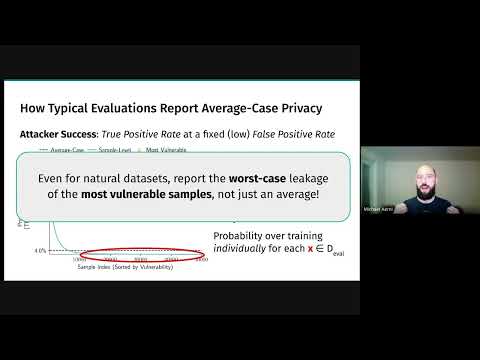

Threat Models for Memorization: Privacy, Copyright, and Everything In-Between

Even under relaxed threat models for machine learning memorization, such as natural data or benign users, AI models show unexpected privacy and copyright vulnerabilities.

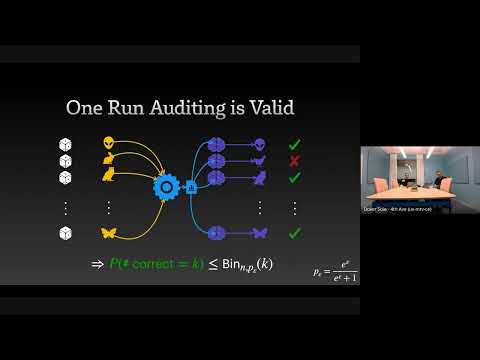

The Limits and Possibilities of One Run Auditing

This talk dissects the theoretical limitations of one-run privacy auditing for differential privacy while demonstrating its practical effectiveness and outlining pathways for significant improvement.

Continual Release Moment Estimation with Differential Privacy

This research introduces a novel differentially private algorithm, Joint Moment Estimation (JME), that efficiently estimates both first and second moments of streaming private data with a 'second moment for free' property, outperforming baselines in high privacy regimes.

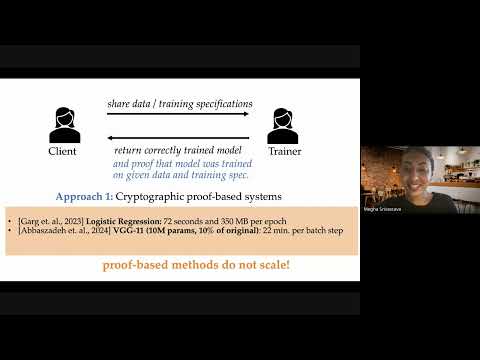

Optimistic Verifiable Training by Controlling Hardware Nondeterminism

This research details a novel method for verifiable machine learning model training that controls hardware nondeterminism, ensuring identical model outputs across different GPUs for enhanced security and accountability.

How Much Do Language Models Memorize?

Meta researcher Jack Morris introduces a new metric for 'unintended memorization' in language models, revealing how model capacity, data rarity, and training data size influence generalization versus specific data retention.