Google TechTalks

Federated Learning, Differential Privacy, Large Language Models (LLMs)

POPri: Private Federated Learning using Preference-Optimized Synthetic Data

Meta research introduces POPri, a novel approach using Reinforcement Learning to fine-tune LLMs for generating high-quality synthetic data under strict privacy constraints in federated learning, significantly outperforming prior methods.

Differential Privacy, Synthetic Data Generation, Generative AI

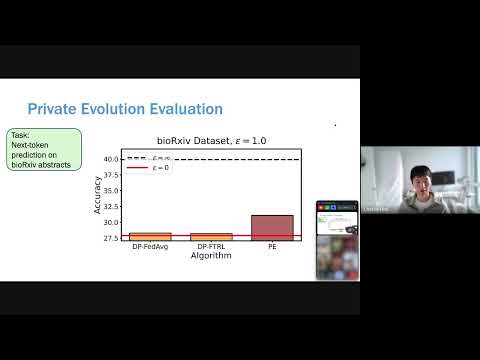

Differentially Private Synthetic Data without Training

Microsoft Research introduces 'Private Evolution,' a novel framework that generates differentially private synthetic data using only inference APIs, bypassing the high costs and limitations of traditional DP fine-tuning.
