Google TechTalks

Private Adaptations of Large Language Models

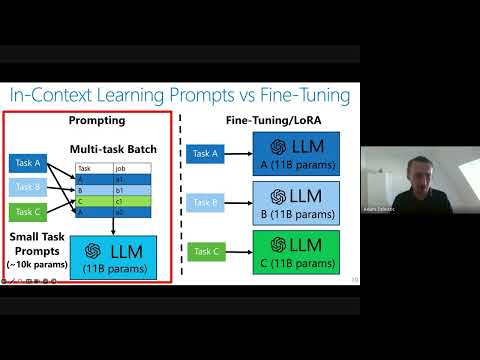

Private adaptations of open-source Large Language Models (LLMs) offer superior privacy, performance, and cost-effectiveness compared to adapting closed-source LLMs, especially for sensitive data.

Worst-Case Membership Inference of Language Models

This talk introduces a novel, highly effective strategy for generating 'canaries' to audit language models for membership inference, revealing a critical disconnect between audit success and actual privacy risk.

The Surprising Effectiveness of Membership Inference with Simple N-Gram Coverage

Discover how a simple n-gram coverage attack can detect, with surprising effectiveness, whether specific data was used to train large language models, even with limited black-box access.
