Google TechTalks

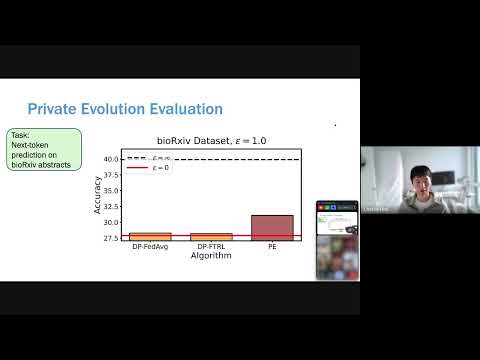

POPri: Private Federated Learning using Preference-Optimized Synthetic Data

Meta research introduces POPri, an approach that uses reinforcement learning to fine-tune LLMs to generate high-quality synthetic data under strict privacy constraints in federated learning, significantly outperforming prior methods.

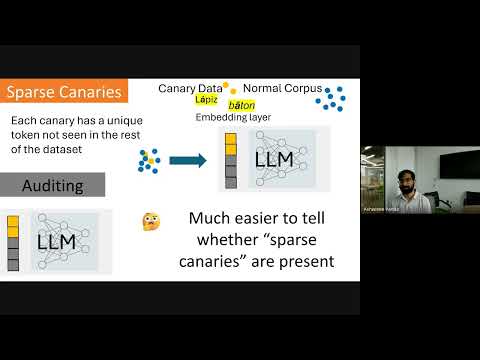

Privacy Auditing of Large Language Models

Existing methods for privacy auditing in Large Language Models (LLMs) systematically underestimate worst-case data memorization, necessitating new canary strategies for effective empirical leakage detection.

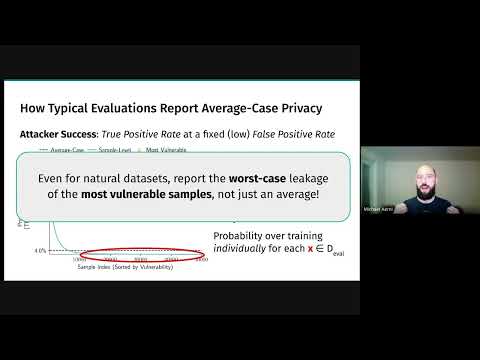

Threat Models for Memorization: Privacy, Copyright, and Everything In-Between

Relaxing threat models for machine learning memorization, even with natural data or benign users, creates unexpected privacy and copyright vulnerabilities in AI models.
